Advanced Operating Systems

- http://www.cs.unm.edu/~darnold/classes/cs587-f09/

- Office Hours: after class

Table of Contents

- Meta

- class notes

- 2009-08-25 Tue

- 2009-08-27 Thu

- 2009-09-01 Tue

- 2009-09-03 Thu

- 2009-09-08 Tue

- 2009-09-10 Thu

- 2009-09-17 Thu

- 2009-09-22 Tue

- 2009-09-24 Thu

- 2009-09-29 Tue

- 2009-10-01 Thu

- 2009-10-06 Tue

- 2009-10-08 Thu

- 2009-10-20 Tue

- 2009-10-22 Thu

- 2009-10-27 Tue

- 2009-10-29 Thu

- 2009-11-03 Tue

- 2009-11-05 Thu

- 2009-11-10 Tue

- 2009-11-12 Thu

- 2009-12-01 Tue

- 2009-12-03 Thu

- reading notes

- Map / Reduce

- peer-to-peer

- Amoeba vs. Sprite

- Network Services

- QuickSilver

- Munin DSM

- CODA

- RAID

- NFS network file system

- log file system

- fast file system

- VM in Multics

- VM in Vax

- lottery scheduling

- resource containers

- Scheduler activations

- Monitors (2)

- Virtualization

- Exokernel

- µ-kernels

- Unix Time Sharing System

- Observations on the Development of an Operating System

- Hints for Computer System Design

- project

- exams [1/3]

- question

- concepts / terms

- overview

Meta

Grade Breakdown

| 10 | participation |

| 25 | homework |

| 25 | project |

| 22.5 | midterm |

| 22.5 | final |

class notes

2009-08-25 Tue

- same course path as undergraduate OS, just much more detail

- all reading in research papers

-

groups

- all reading

- all work

- one day's lecture

-

research-lite project

- proposal in ~3rd weak of Sept.

- 1-2 month working on implementation

- will produce a research-paper

- undergrad lectures serve as good background for the course

2009-08-27 Thu

email server was down, try again or send email to Dorian

concepts

overview

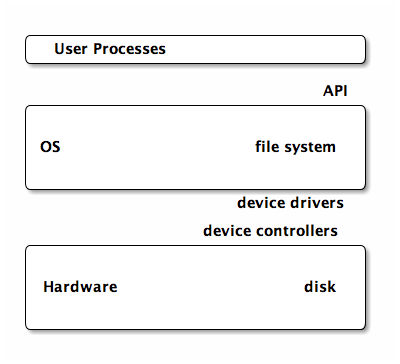

- OS

-

software providing access to hardware (cpu, memory, disk, IO)

- policies

- what user can do

- mechanisms

- how policies enforced

- permission levels

-

often controlled by indicator bit

- user

- kernel

- system call

-

allows access to kernel functions from user mode

- syscall is made

- parameters stored in registers

- switch to kernel mode

- execute routine defined in the kernel

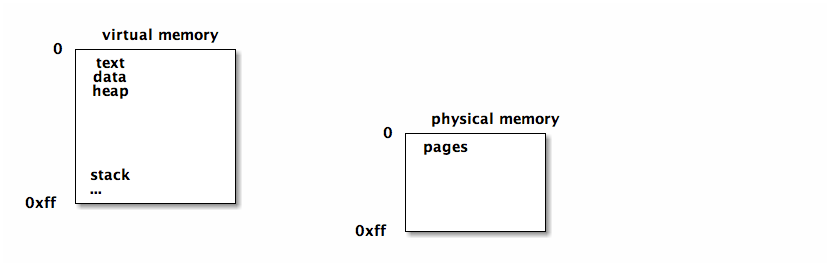

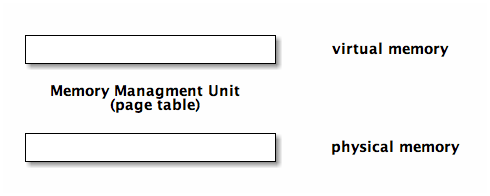

virtual memory

virtual address space which can be mapped to actual memory. this allows the process using the memory to be loaded/unloaded/moved etc…

if a page of virtual memory is not in physical memory a page fault occurs and the page is loaded into physical memory

working set / footprint

of a process is the parts of it's address space currently in use. this is the pages of memory that need to be loaded in physical memory to avoid memory.

design goals

- efficiency

- often a tradeoff between time and space

- robustness

-

fulfills expectation of users

- security

- hardware interface

- expose features/capabilities of hardware

- user interface

- present features/capabilities to user

- portability

- target hardware

- economics

- development cost, user base

- scalability

- range of supported hardware/user sizes/numbers

- extensibility

- ability to support new components

papers

keep in mind the context

- users back then were developers

- cpu used to be bottleneck now it's memory

increasing gap between CPU speed and the ability of memory and bandwidth to keep up.

- bandwidth is proving the limit on the amount of memory which can be used efficiently

observations

hints

2009-09-01 Tue

Stephens Richie and Thompson developed unix and TCP/IP

-

Stephens

- part of the unix team

- wrote the unix bible

2009-09-03 Thu

- uni-programming

- only one process at a time, typically it would run to completion

- batch-programming

- still uni-programming but you maintain a queue of processes that are ready to run

- multi-programming

- allows multiple processes to run "simultaneously" on the machine using preemption, time slicing and by utilizing different hardware components in parallel

- time sharing

- multi-programming with multiple users creating processes. these days, tend to make the most sense for large batch processes, rather than interactive use

Mechanisms for multi-programming

- context switch

- switching processes on a CPU

- process table

- maintained by the OS, this contains an entry including a process control block for each process currently running on the system

- process control block

- contains the PID, the files the process is using, the program counter, register values, a pointer to the image

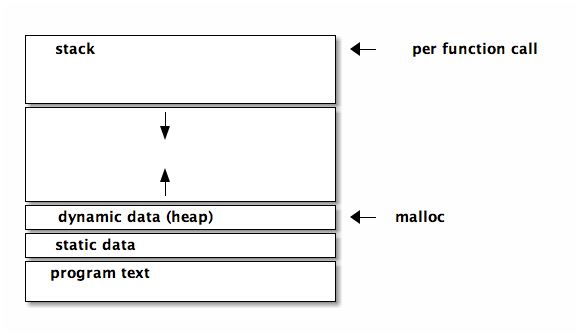

- image

- when you are about to run a process you load that process which creates an image of the process and bring it into memory. image+state=process. program -> image -> process

file systems

- file

- in general to the OS a file is just an uninterpreted raw ordered set of bytes, some specialized OSs do differentiate between file types for optimization.

- directory

- list of files, most OSs limit access to these files to system calls

mechanisms

- filename relates to an index # which points to the index table which relates the index # to an i-node

- when mounting a new disk the first couple of bites on the disk contain the information used by the OS to populate the index table

- generally corrupt disks are the results of damage to this meta data section on the front of the disk

links

- hard link

- the contents of the file is a pointer to the i-node of the file

- soft link

- the contents of the file is the name of the file to which it is pointing

deleting a file

- the actual data isn't "erased", rather the link counter in the i-node is decremented, and if there are no more links, then the blocks on which the file is written are added to the free list

- deleting a soft link doesn't change it's target's i-node

2009-09-08 Tue

- processes

- unit of work user gives to the OS

- thread

- finer unit of work inside the process

processes

schedulers

- batch queue

- outside the OS, waiting jobs

- short term scheduler

-

many different scheduling policies

- round robin

- priority

- shortest-first

scheduling

scheduling policy

- dispatcher

- implements the scheduler policy

- goals

-

- timing

-

- responsiveness

- time to first response

- waiting

- total time spent waiting

- turnaround

- time start to finish

- resource utilization

- users don't really care about this

policies

- fifo

- first in first out

- round robin

- move around giving everyone time slices

- shortest job first

- theoretical, not in real life

- priority

- not a complete policy, combined with fifo or round robin etc…

- multi-level queues

- semantics added to queues (i.e. system, interactive, batch, IO-bound, etc…)

- multi-level feedback queues

- jobs can change priority over time, based on things like increasing the priority of long-waiting jobs to avoid starvation

threads

multiple processes sharing an address space

each thread would have it's own

- UID

- stack

- registers

- defining handling signals like C-c, segmentation violations, etc…

everything else is shared (text, static data, dynamic data)

threading schema

- many-to-one (user-level threads)

-

don't really speed up execution,

but could help to modularize a program

- if one thread blocks for IO, all threads are blocked

- thread operations (creation, deletion) are performed in user-space which makes them faster than having to do them in kernel space

- one-to-one (kernel threads)

-

- real parallelism

- less portable

- takes longer to create a thread

- real parallel execution

- many-to-many (hybrid)

-

- static

- static mapping between user-threads and kernel-threads

- dynamic

- thread mappings can be changed

- pool

- you can establish a pool of kernel threads, then do all further thread operations in user-space (faster)

- limit

- you can limit the number of kernel threads to something reasonable (number of processors) reducing overhead on the OS and times jumping the user|kernel boundaries

- complicated

- this is the most complex of the threading schemas

- fixed

- most often you have a fixed number of kernel threads

inter process communication

through sharing or message passing. each of these could be implemented in terms of the other

in both cases

- synchronous == blocking - asynchronous == non-blocking

message passing

- µ-kernels can be though of as using message passing

- messages typically have to pass through kernels

- can cross machine boundaries

shared memory

- monolithic kernels can be thought of as using shared memories

- typically faster than message passing

- requires shared physical media

-

when two processes try to access a variable at the

same time

- i read

- you read

- i process and write

- you process and write

- we've both missed the other's changes

- atomic operations, locks, semaphores (binary, counting), etc…

2009-09-10 Thu

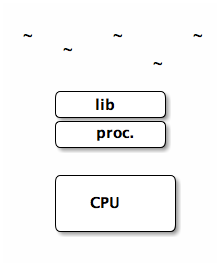

talking about µ-kernels.

address space == process

L4 kernel has hierarchical address space

- every process inherits address space from a parent, and the initial address space (sigma-0) maps directly to physical memory

-

which is like monolithic unix where everything descends from the

initprocess. - manages address spaces

paging vs. contiguous allocation

- contiguous allocation

- has a base and offset registers which are used to map the virtual addresses to the physical disk

- paging

-

pages of memory map randomly (not contiguous) on disk

- more complicated translation from virtual to physical addresses

- allows you to fill holes one disk (finer granularity because physical memory is eaten in page-size chunks rather than address-space chunks)

- allows portions of the address to be loaded individually as opposed to contiguous allocation where the entire address space must be loaded before any execution can take place

1st v.s. 2nd generation µ-kernels

- second tailored more to hardware

- second built from scratch (rather than back from monolithic kernels)

interrupts (µ-kernel is slower)

main reason µ-kernels are slower is because every interaction is translated through IPC which has to go through the table

- monolithic kernel

- hardware interrupts in a monolithic kernel are directly looked up in a register table

- µ-kernel

- one thread waiting for each potential interrupt source

top-half / bottom-half interrupts (in linux)

tradeoff between speed of handling interrupts, and need to do significant amount of processing in many cases

- top-half

-

responds quickly and does what needs to be done

immediately

- for example it will just record that the interrupt occurred

- has high priority and can interrupt other interrupt handlers

- setup

- bottom-half

-

does the actual bulk of the work

- has lower priority

- service

system call mechanisms

.soshared object- can be shared across multiple address spaces

.astatic shared code- statically linked (shared) at compile time

- trampolines

- jumps the code execution to somewhere else, then jumps back

scheduling

in L4Linux the normal linux scheduler is used much like a many-to-one thread scheduler. Maps all of the linux "user-threads" to a single kernel thread.

the L4 kernel is scheduled using hard priority round robin

L4 scheduling priority levels:

- top-half interrupt handler

- bottom-half interrupt handler

- kernel (which is the linux server)

- user

translation look-aside buffer (TLB)

Fast associative memory that helps in address translation

It maps a virtual address to a physical address, if you hit in the TLB, then you don't have to look up the page.

- tagged TLB

- like a TLB plus information as to which process the address belongs to

in a normal TLB you have to flush all entries in the TLB to clear old mappings, in a tagged TLB you don't need to flush the TLB on context switch. this saves time which quickly switching between processes and back.

dual space mistake

tried to facilitate speedy kernel <-> user IPC through shared memory

- space costs (doubling memory usage)

- synchronization costs (takes time)

co-location

allows multiple processes to all have access to kernel memory, like threads

2009-09-17 Thu

Project Ideas

-

System monitoring stuffs

- DynInst

- KernInst

- PIN

- PAPI (Performance API)

-

massively parallel stuffs

- map-reduce

- hadoop, w/PIG

- MRNet large scale group operations

- RPC

- XML-RPC

- task-farming (programming model, issue tasks to the farm and collect results, example SETI-at-home)

-

threads

- end-to-end threading model

- deadlock prevention

-

file systems

- encrypted

- process hijacking

- project HAIL

- FUSE (MAC-FUSE)

- Amazon Dynamo

exokernels

secure bindings

pain to write to

the spirit of the exokernel is that you would normally use abstractions exported by the library OS rather than always having to use your own

µ-kernel vs. exokernel vs. monolithic-kernel

-

Monolithic kernel

+------------------------+ | S1 S2 S3 | | | Monolithic kernel | S4 S5 | +------------------------+ ^ App -

µ-kernel

+------+ |App | +------+ | |-\ | App | +------+ -\ | | +-------------+ +-/----+ | | /- | | /- | u-kernel |-- +---/-----\---+ / -\ /- --\ / +---------+ +--/-----+ | S2 | |S1 | | | | | +---------+ +--------+ -

exokernel

+----+ +----+ +----+ |App | |App | |LOs | +----+ +----+ +----+ +-------------------+ | exokernel | +-------------------+ | Hardware | +-------------------+

downside (cooperation)

when each application has direct access to the hardware it becomes difficult for applications to cooperate (intelligently share resources), which is routinely done in standard kernels.

2009-09-22 Tue

monitors

(see ../cse451/notes/2007-10-17)

software construct which provides for mutual exclusion around a resource. maintains the invariant that when entering a monitor there is no-one else inside of the monitor.

- condition variables

-

allow communication between processes

avoiding spinning (

while(condition !true);)-

signal()alerts other processes -

wait()sleep (relinquish cpu) and wait to be signaled

-

- semaphore

-

semaphores are effectively equivalent to condition

variables

-

sem.p()waits on the semaphore -

sem.v()signals those waiting on the semaphore

-

Synchronization Problems

Mesa Monitors

Mesa

-

programs comprised of modules

-

clear API boundary between modules

- public interface

- private procedures

-

clear API boundary between modules

Mesa Monitors

-

monitor module

- entry procedures (publicly interface)

- internal procedures (private procedures)

- external procedures (procedures that require no locking)

Issues

-

when in a monitor (in function

foo), and you call a functionbarin another module then during the execution of that function you are not in the monitor (barhas no access to the structures of the monitor while as it is another module)-

if you don't release the lock when moving into

bar-

you have the risk that something in

bartries to grab the resource protected by the monitor /deadlock/ -

you have to

unwindand open locks if say there is a deep exception

-

you have the risk that something in

-

if you do release the monitor while calling

baryou need to-

ensure that you get the monitor back after executing

bar -

potentially do cleanup before/after executing

bar

-

ensure that you get the monitor back after executing

-

if you don't release the lock when moving into

- this is tradeoff between simplicity (per class) and efficiency (per object), best option really depends on the use case. monitor per class is sort of a strawman

-

it is possible for lower-priority processes to

run in front of higher-priority processes.

- p1 acquires l1

- p2 preempts p1

- p3 preempts p2

- p3 acquires l1 (but can't because p1 has the lock)

the problem is that p2 will run in front of p3, because p1 can't run and release the lock until p2 has run to completion

- priority inheritance

- associate a priority with a resource (lock) and the priority of that lock is set to the highest priority of those processes waiting for/on the lock. the priority of the process inside the lock is set to the lock's priority.

Difference between Mesa and Hoare

-

in Hoare you are guaranteed that immediately upon signaling of a

condition variable the waiting process will receive control, however

in Mesa monitors the signal is more of a hint and you are not

guaranteed to receive control when a signal is sent.

-

Hoare

if(!cond){condition_v.wait()} -

Mesa (must re-check after condition becomes true)

while(!cond){timed_wait()}

-

Hoare

-

Mesa

- timeout

- abort

- broadcast vs. signal

- naked notify

-

allows hardware interrupt to signal a condition

variable without first acquiring the monitor lock. this is

more efficient than forcing a device driver to wait for a lock

to be released before accessing a monitor.

-

this could lead to a problem where a device signals that a

resource is free, but the notification is missed by a process

which is just switching from

!condtowait() - note that this only allows the hardware interrupt to signal the condition variable, not to actually touch the resource

-

this could lead to a problem where a device signals that a

resource is free, but the notification is missed by a process

which is just switching from

2009-09-24 Thu

scheduler activations

wherever their system does well they present the numbers in a table. when their system doesn't fare so well they embed the numbers in the prose.

- virtual processors to real processors

- how do virtual processors map to real processors

- SMMP

- shared memory multi-processors, many CPUs which all have access to a single big block of shared memory

- normal user-level threads

-

may not really be that much faster than

kernel level threads (at least not to the point that this paper

claims)

- these user-level threads

-

in this paper when they say user-level

threads they mean the following model

- kernel scheduling

- priority levels and equal access for each priority level

- lifo

- when there are not enough processors to run all threads, then they follow a lifo policy to take advantage of cache locality (if I was running recently then my cache is still around)

- critical section

-

need to be careful not to preempt a thread in a

critical section (or at least let it get back quickly)

when a guy in a critical section is preempted and other people are waiting for his lock (and they've pushed him down the lifo queue) then you could deadlock (as he can't get up the queue until they finish and they can't finish until he runs)

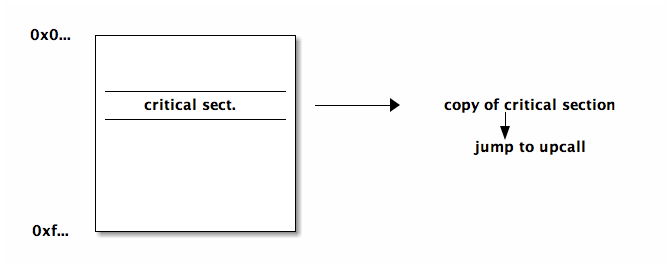

- solution

- make a copy of each critical section which ends in a jump to an upcall. when the kernel preempts a process the kernel checks if the code is in a critical section, and if so, it jumps to the copy which is guaranteed to jump to an upcall when the section completes

- spin lock

- burns CPU, but keeps a process on the ready list, good for short wait, or when you have processor to burn

- upcalls

-

used for the kernel to talk to the user process

- preemption

- adding procs

- blocking

- unblocking

- downcalls

-

when the user-space communicates to the kernel

- more procs

- less procs

2009-09-29 Tue

lottery scheduling

a proportional-share-scheduling system where each entry (waiting consumer/process) gets some number of tickets, and whenever a resource is to be consumed a lottery is held and the winner's ticket is taken and the winner is placed in control of the resource.

specifics

- actually help many lotteries at once forming a queue (rather than a lottery every time quanta)

- processes can give their tickets to other processes (i.e. client server model, client could give tickets to server)

- given to processes that release the CPU before their time quantum has expired

- more uniform stat distribution with more samples -> smaller quantum leads to more samples

-

tickets can be used for any resource

- memory management

- reverse lottery, when a page needs to be evicted from memory a lottery is held to select page to remove

resource containers

aimed at implementing a web server

relevant metrics for web server

-

client metrics

- response time

- throughput

-

server metrics

- number simultaneous clients

- quality of service, might want different levels for different clients

resource containers allow the application to specify resource containers and tell the kernel how to assign resources to the resource container.

mechanism of resource containers

- connection comes in and is wrapped in a resource container

- thread handling that connection is bound to the resource container

- additional resources (i.e. file descriptor) are bound to the resource container

this can be useful for handling malicious requests (i.e. if they're tagged as malicious on the way in they can be given little/no resources)

memory management

handling the speed/capacity tradeoffs of memory maintaining

- performance

- protection

- correctness

/\

/ \ |

speed ^ / \ capacity v

| / reg. \

/--------\

/ \

/ cache \

/--------------\

/ \

/ main memory \

/--------------------\

/ \

/ local disk \

/--------------------------\

/ \

/ cloud, remote disk, tape \

----------------------------------

relocation

addressing schemas (w/static relocation)

- source code

- symbolic representation of memory addresses

- compiled code

- relative refs (e.g. module x + offset)

- loaded code

- absolute addresses

so to change where the code is located in memory you will generally need to reload the code. dynamically relocatable code has it's absolute addresses resolved at runtime rather than at load time, so the code can be moved without reloading the code.

allocation

- contiguous allocation

-

simple (base, limit, attr). makes context

switches very simple (the kernel only need to change the base and

limit registers)

- external fragmentation

- may not have enough contiguous free space

- sharing

- can't share w/o sharing entire address space (no portions)

- setting attributes

- same as above, can't identify parts of the space

- segmentation allocations

-

divide address space into segments of

arbitrary size. segment number -> (base, limit, attr)

- external fragmentation

- because with variable length sizes there could be many free spaces which aren't big enough to be used

- paging

- (most popular) fixed size segmentation. this ensures that there is no external fragmentation (if there is any space available then it is page sized and can be used). this is still vulnerable to internal fragmentation

page table

2009-10-01 Thu

2009-10-06 Tue

disco (implementation & performance)

OS modifications

- drivers for DISCO specific "hardware"

- changes to keep OS from trying to access a small chunk of unmapped memory

- (small) allows the guest OS to request a 0'd page (so the guest OS doesn't have to re-0 a page)

- (disco) interprets the guest OS going into low power mode as the OS yielding the processor

virtual memory

Multics

- since segments are organized/structured as files they actually didn't have a file system. referencing a segment through it's symbolic name is like referencing a file

-

seg.tag | address | opcode | external | addressing-mode- seg. tag

- points to the base register of the owning base register

- external

- whether to use the segment tag (if external) or your own base register

-

address points to another address. happens

when you have multiple levels of paging hierarchy.

- indirect address points to 2 36-bit words, the new segment number and the new word number

-

reference to external program

- symbolic name -> module name

- symbolic address -> function name or variable name

- are added to each process to hold the lookup information for external segments. after an initial reference the number of the link in the linking segment is used for future references.

VAX

- VMS addressing

-

2-bit seg. | 21-bit page number | 9-bit offset- segments

-

system space, program region, control region

- program region

- user data for the program

- control region

- kernel data for the program

- TLB

- the TLB is split in two (system/process), less has to be flushed on context switch

2009-10-08 Thu

VM pros and cons

-

pros

- larger address space

- convenience in segmentation and paging

- code portability

-

cons

- (time overhead) increased effective memory latency

- (space overhead) maintaining mappings, page tables

- increased complexity

2009-10-20 Tue

disks and file systems (see related 481 slides on Dorian's homepage)

disks

- disks

- stack of platter of concentric circles (or tracks) of sectors along with a movable arm (in all modern systems) and there is one arm/head per platter. each platter (aside from top and bottom) has data on both sides.

file system

semantics on top of disks

abstractions

- files

- directories

handles

- permissions

- mapping abstractions to disk

- enforcing resource quotas

directory

-

just a special file which consists of a list of entries

- directory entry contains: filename, id, inode-#

-

certain operations (

cd,ls) can only take place on directory files -

organizations (in increasing complexity)

- 1-level directory

- 2-level usernames/files

- trees (graph with no cycles)

-

acyclic graphs (sharing: multiple links to the same content)

- soft/symbolic link

- the file just maps to the name of another file (allows dangling pointers)

- hard link

- actually copies the inode-#, an inode (and the file) is removed when there are no more hard links pointing to the inode. this information is tracked in the inode

- general graphs

filesystem on disk:

- boot control block

-

volume control block

- # of blocks

- # free blocks (list)

-

directory structure

- starts @ root disk

- filenames, inode-#s

-

file table

- maps inode-#s to inodes

when a device is mounted the OS loads the filesystem structures into memory

filesystem in memory:

- mount table

- cache directory structure

-

open file table (another cache)

- variations: system wide or per process (know the pros and cons of each of these options)

- caching (pages/contents of the files)

2009-10-22 Thu

going through the midterm

Grade Distribution

| mean | med | max | max possible | |

|---|---|---|---|---|

| p1 | 23 | 25 | 30 | 30 |

| p2 | 11 | 12 | 14 | 15 |

| p3 | 19 | 20 | 25 | 25 |

| p4 | 8.7 | 10 | 15 | 15 |

| total | 61 | 61 | 84 | 85 |

Review of problems (in general on the exam less is more)

-

-

-

exokernel, library OSs are linked into the application space of the

application, so the

getpidcall is just a function call in the user-space which would not have to cross the user/kernel boundary, so this would be faster than the monolithic kernel -

-

protection, multiplexing, IPC

-

-

exokernel, library OSs are linked into the application space of the

application, so the

-

3) 1)

-

-

-

it is much more complicated to move a process than to move a

block of data. if you have many readers/writers of a block of

data it may make more sense to move the users to the data

rather than moving/replicating the data.

-

- in message passing structured IPC using copy-on-write can allow pointers to be passed from process->kernel->process rather than the actual block of data

-

it is much more complicated to move a process than to move a

block of data. if you have many readers/writers of a block of

data it may make more sense to move the users to the data

rather than moving/replicating the data.

-

2009-10-27 Tue

will discuss LFS and RAID on Thursdays

- LFS

- wanted to improve performance and ended up improving filesystem reliability

- RAID

- vise-versa

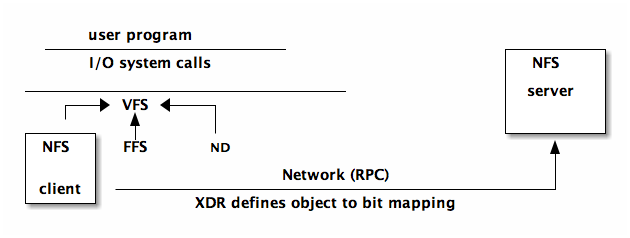

Network Files System (NFS)

remote file access

-

pros

- larger file servers (capacity)

- sharing

- robustness / redundancy

-

cons

- speed (latency)

- availability

- consistency

- complexity

-

NFS specific goals

- 80% speed of local disk

-

simple crash recovery

-

can repeat operations until success (idempotent). many

operations are not naturally idempotent, for example the read

operations

read(f, out, nbytes)would normally increment a counter in the file, in nfs this counter must be tracked on the client side and passed as a parameter to the server

-

can repeat operations until success (idempotent). many

operations are not naturally idempotent, for example the read

operations

- no state on the server

- transparent access

- preserve Unix semantics

-

Deployment Issues

- sharing the root file system

-

scalability/performance sharing heavy use files (e.g. binaries

required on startup)

- made these files local to each individual node

-

/tmpfiles (use the process ID, which wouldn't be unique across different nodes) -

/deventries of this directory have local semantics which make no sense to access on a remote system - authentication across machines (need a global system of user IDs, "Yellow Pages")

- concurrency: local locks but no global locks, so two users on different nodes could have their writes to a file interleaved.

-

performance: (solution is always caching)

-

calls which occur often, but transfer small bits of data,

(e.g.

getattrwhich is called byls, and pretty much every file access, this was initially 90% of the transactions) – so, they just cached attributes, this cache is invalidated every three seconds for files and thirty seconds for directories -

used

UDP(Unreliable Data Transport), so if a packet in a RPC is lost they'd just redo the RPC -

really big packets

- read-ahead to try to get blocks before their needed – this doesn't help for executables with random access patterns

-

calls which occur often, but transfer small bits of data,

(e.g.

- VFS (virtual file system) abstraction on top of the specific file system used. allows file systems to be plugged in sort of like device drivers

- XDR is used as a canonical data representation ensuring that when the client and server share objects (ints, arrays, etc…) they cache their objects out into bits in the same way (endianness, float representations, etc…)

2009-10-29 Thu

(if we are ever really interested in a paper we could lead that lecture)

disk failures

updates, 3 parts – related to disk failure

- (D) data blocks

- (F) free blocks

- (M) meta-data blocks

disk failure part way through a write could lead to incoherence in the three above. most FS will perform the above in such a way the any inconsistency is a "functional" inconsistency – while space may be wasted everything will still "work".

some crash cases

- (D) -> crash

- no real problem, just wasted time writing to a block that's still on the free list

- (D) -> (F) -> crash

- leaked a data block that will not be recovered

- (F) -> (M) -> crash

- functional problem, file points to whatever was previously on disk (garbage or someone else's old data)

fsck checks that

- all blocks not on free list are in use – referenced by an inode

- all blocks referenced by an inode are not in the free list

journal/log-structured differences

- journal – transactions in progress which can be used to recover from crash/failure

- log structured FS – actually uses the log as the only structure on disk

RAID / LFS

writes are buffered in main memory until there is a segments worth of data to write to disk. This allows the entire segment to be written w/o any seeks taking advantage of the disk's full bandwidth.

in RAID there is a slowdown factor of N when writing to N disks.

in LFS the checkpoints become the journal

RAID levels (5 and 1 are the only common levels)

- striping across a single disk

- straight mirrored disks, faster reads as you can read from both disks and whichever returns first wins (best case seek), for a write you have to wait for the write to complete on both disks (worst case seek)

- Hamming code for ECC

- Single check disk per group

- Independent read writes

- No single check disk (large performance increase over RAID level 4)

note know the basic read/write operations for each level and be able to discuss the performance implications

2009-11-03 Tue

LFS and RAID

- LFS

- main point is the caching setup. user <-> cache <-> disk

- RAID

-

don't need to know the names of the specific levels, but

should be able to derive the mechanisms for reading/writing, as

well as the implications speed/reliability for these mechanisms.

RAID can be implemented in hardware or software. Be able to

extend these concepts (e.g. RAID 7 is )

- 0

- block-level striping

- 1

-

simple mirrored disks

- read: could use either disk (faster), for a multi-block read each disk could serve up different blocks

- write: will necessarily use both disks

- 5

-

block-level striping and distributed parity – parity is

spread across all disks

- read: will either only touch the specific disk which the block lives on, or will read all disks (including parity) and will reconstruct the data

- write: must touch all disks, writes to the disk on which the data will live and to the parity disk and reads from the other disks to calculate the parity

blocks and sectors

- block

- software construct, typically will be equal in size to either a single sector or multiple sectors

- sector

- the actual size sections of the physical disk

CODA

- call backs are used in asynchronous operations, they alleviate the need for active probing. allows the server to alert the client when a change occurs – used in CODA for cache coherence

2009-11-05 Thu

general consistency

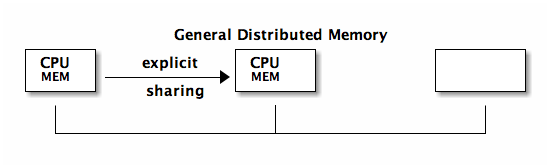

by and large message passing has beat out shared memory when it comes to distributed computing. MPI is the de-facto distributed memory standard openMP is a new message passing alternative.

typically there is no global clock

- strong consistency

- (called sequential consistency in Munin paper) any write is immediately visible to subsequent reads

- causal ordering

- uses communication between processes to determine a global partial ordering

- weak consistency

- this is not really ever used. makes no guarantees that writes will be visible to future reads

- eventual consistency

- write will eventually be seen

- release consistency

- requires data to be visible only at certain synchronization points (i.e. at release or barrier)

Munin

Munin – shared program variables are annotated with their access pattern which is used by the OS

- barrier

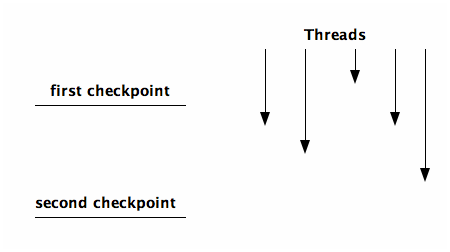

- designate a point where you will wait at that point until every other thread gets to that point

- split-phase barrier

-

two checkpoints, everyone can pass the first

checkpoint arbitrarily, but no-one passes the second checkpoint

until everyone has passed the first

Munin Annotations and Protocol Parameters

| annotations | I | R | D | FO | M | S | FI | W |

|---|---|---|---|---|---|---|---|---|

| read-only | N | Y | N | |||||

| migratory | Y | N | N | N | N | Y | ||

| write-shared | N | Y | Y | N | Y | N | N | Y |

| producer-consumer | N | Y | Y | N | N | Y | N | Y |

| reduction | N | Y | N | Y | N | N | Y | |

| result | N | Y | Y | Y | Y | Y | Y | |

| conventional | Y | Y | N | N | N | N | Y |

Meanings of Parameters

| I | invalidate or update |

| R | replicas allowed? |

| D | delay vs. immediate |

| FO | fixed owner? |

| M | multiple writers allowed? |

| S | stable sharing pattern? |

| FI | flush changes to owner |

| W | writable? |

Non-functional performance enhancing objects

- ability to map an object to a lock

- ability to explicitly flush changes to an object

Implementation

- maintained a hash table mapping object addresses to their attributes

- copyset was a list of where (which processors) an object currently exists

- delayed update queue (DUQ) to hold updates which will need to be propagated, generally held until barrier and then sent to everyone in it's copyset

question: why only use twins when there are multiple writers?

2009-11-10 Tue

Munin implementation

- DHT or Distributed Object Directory

-

delayed update queue

- page twins: two copies of a page used to find out what the differences are between old/new versions of the page

- distributed locks were effectively a queue, person at the front owns the lock and everyone else is further down the line.

page faults used to track updates

- write protect pages that process would normally be able to write to

- when page faults allow write to go through but make a note and maybe update remote copies of the page

Quicksilver

- transaction

-

collection of operations into a single atomic unit of

consistency and recovery. techniques include…

- locks

- mutexes

- semaphores

- monitors

- h/w instructions

- interruptible disabling

- commit protocols

-

some things to be considered as goals

- atomicity

- recovery semantics

-

minimize overhead

- blocking/sync

- logging overhead

- communication

- two phase commit

-

coordinator and subordinates

transaction_begin 1 2 3 ... transaction_end

-

the coordinator

- initiates the transaction

-

preparemessage is sent to all subordinates - subordinates act and respond

-

send

commit

-

the subordinate

-

upon receipt of

preparemessage the subordinates reply with eitheryesorno -

no-> veto -

or go to

preparedstate and update logs and respondyes

-

upon receipt of

-

the coordinator

2009-11-12 Thu

Quicksilver

locks used to make a monolithic unit out of a series of operations

- short lock

- would only be held for a single operation inside of a transaction

- long lock

-

could be held for an entire transaction

short locks long locks read write - degree 0 consistency

-

short write lock and no read lock

- cascading abort

- dirty reads

- non-repeatable reads

- degree 1 consistency

-

long write lock and no read lock

- dirty reads

- non-repeatable reads

- degree 2 consistency

-

long write lock, and short read lock

- non-repeatable reads

- degree 3 consistency

- long write lock, and long read lock

locks in the context of their DFS (Distributed File System)

-

directories

- locks for renaming, creating, deleting

- write lock for dir.entries

- no read locks

-

files

- short read locks and long write locks

highlights (distinguishing features)

distributed OS using transactions for data consistency

wrapped applications in trivial transactions, so bad quit would remove all previous changes

in order to share a transaction with another process you would need to fork that process

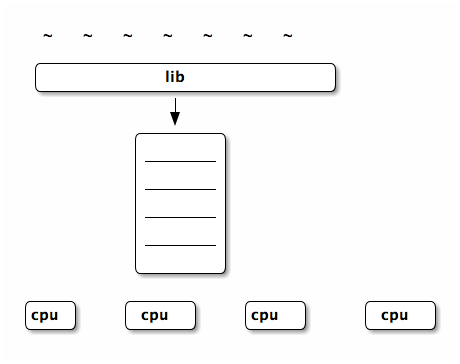

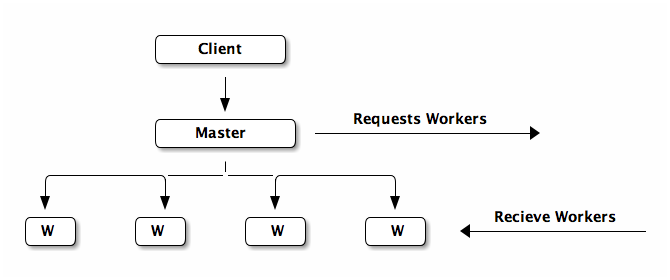

Cluster Based Scalable Network Services

advantages

- small unit of fault -> robust

- scalable

- cost effective

BASE

- Basically Available

- Soft state

- Eventual consistency

Condore is another system that finds idle machines and sends them work when work accrues

implementation

components of the system

-

front end

- http server

- thread pool

-

workers

- to provide services

- to hold the results of computation

- report failed services to the manager

-

manager

- calculates load and sends requests to the front-end

- receives failure reports from workers

failure peers vs. failure pairs

- failure peers

- manager watches front-end and restarts if it crashes and vice versa

- failure pairs

- more generally called hot backups where each component has a backup which can take over if one fails

2009-12-01 Tue

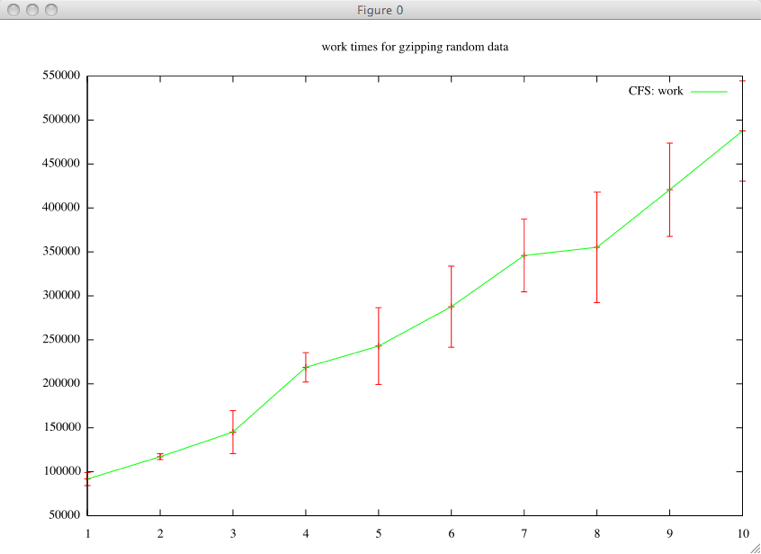

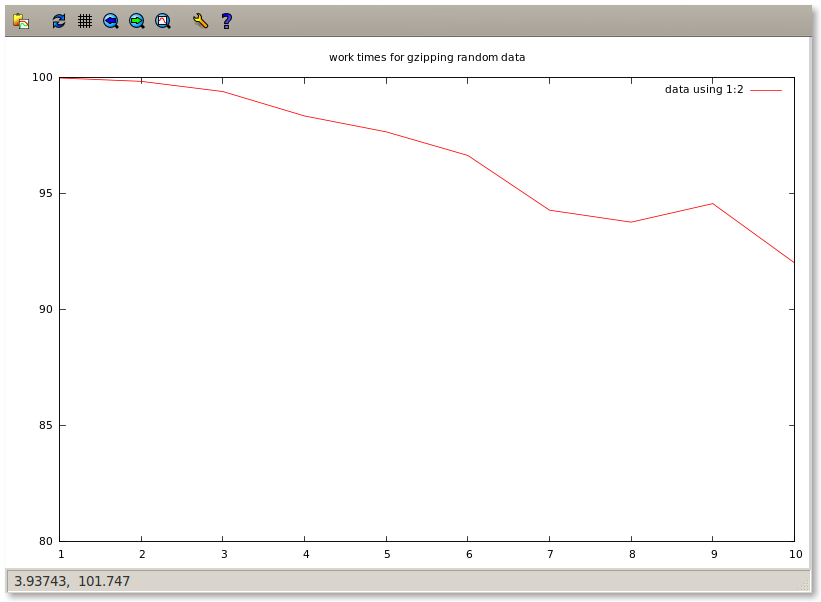

cover CFS and do Map-Reduce on Thursday, presentations starting next week

final

- lets try to do a final-review outside of class

- final will sprinkle questions over the first half, but will focus on the second half

project

- paper is due at the end of next week

- 10-12 minutes per group – 8-10 slides

CFS

- lookup

- (finger table and successor list) the successor list was slow because on average you would have to touch half the servers in the system, so the finger table was added to store IDs of far away people for quick jumps to distant portions of the circle.

- caching & timeout

2009-12-03 Thu

map reduce

-

stream programming collection of filters which the data passes

through

+---+ | F | +---+ /- -\ / -\ /- -\ +-+ +---+ |F| | F | +-+ +---+ -\ /-- -\ /- +---+ | F | /+---+\ /-- ---\ +-+ +---+ |F| | F | +-+\ /+---+ \ / \ / \ / +---+ | F | +---+

- consistency

- can handle failures in workers (just aborts if master happens to fail) by repeating the computation for failed workers. this mains that the worker tasks can happen multiple times – so they must be idempotent (i.e. side effect free). also the computation would need to be deterministic for re-doing of failed nodes to have no effect.

- backup tasks

- only as fast as your slowest worker – so as workers finish the unfinished tasks are duplicated to idle workers in the hopes that someone new will finish the task earlier

- combiner function

- can be run on the local map worker to compact the data before it is sent of to be reduced

- skipping bad records

- when some records continually cause workers to fail then they will be skipped

- local execution

- ideally workers will be selected which are close to the data which they will be analyzing

reading notes

Map / Reduce

Amoeba vs. Sprite

both truly distributed operating systems in contrast to most of today's large distributed system which has node-local OSs with a global managing agent.

Network Services

QuickSilver

Munin DSM

CODA

RAID

NFS network file system

log file system

fast file system

Old FS: (order on disk)

- superblock

- inode blocks: direct (first 8 blocks) v.s. indirect blocks

- data blocks: size (initially 512 then up to 1024)

issues with this setup

- inodes not located near the data, so many non-contiguous jumps

- issues with fragmentation

- didn't take advantage of the structure of the disk (too much random access of the file)

New FS:

- collocated inode and file data (in the same cylinder group)

- replicate the superblock information across all cylinder groups (reliability)

-

variable block sizes (4k block size has average 2k internal fragmentation)

- split each block into anywhere from 1-8 fragments (powers of two) and managed free space on a fragment (rather than block basis). this can incur bookkeeping and overhead problems (as a file increases in size it may need to be continually copied between fragments and blocks).

- exploit h/w characteristics by trying to adjusting notion of "contiguous" based on the speed with which the disk can move between segments

- collocate directories and files

VM in Multics

goals

- provide the user with a large virtual memory hiding moving of data between levels, and any machine-dependent stuffs

- allow procedures to be called by name w/o any need to plan for the storage of the called procedure

- permit sharing of procedures and data among users subject only to permission restraints (vital to efficient operation in a multiplexed system)

process, address space

processes and address space stand in a one-to-one correspondence

address space is composed of variable length segments, each segment is either data or procedure which affects it's access permissions.

segments are addressed using a directory structure similar to files.

addressing

- generalized address

- consists of a segment number and a word number

- address formation

-

based on values of processor registers,

different for process/data segments

- process

- segment number in procedure based register + the program counter

- data

- the segment tag of instruction selects a base register if the external flag is on. otherwise the segment number is taken from the base register

- indirect addressing

- in this case the generalized address is used to fetch two 36-bit words, these are combined to form another generalized address. can be nested

- descriptor segment

- generalized-address -> main-memory is done using a two-step hardware lookup

- paging

- of segments allows non-contiguous segments of main memory to be referenced as logically contiguous generalized addresses

intersegment linking and addressing

shared access and building upon others addresses are both important goals of multiplexed machines

requirements

- pure procedure segments execution can't change their content

- symbolic procedure calls without making prior arrangement for the procedure's use

- segments of procedure invariant to recompilation of other segments

implementation

- making a segment known

- when the segment is called by symbolic name it is added to the caller's description segment and can later be referenced by number

- linkage data

- a processes code must be invariant to compilation, so the process will always use a segments name/path to address it. after the segment is known, then it's number can be used. a linkage segment will hold the information on name/path -> number transformations so that the numbers can be used for known segments w/o changing the contents of the process

VM in Vax

process & virtual address space

page number and offset within the page

address space divided into spaces (not segments)

- system space

- high-address half is system space and is shared across all processes. This contains OS stuff, executive code and protected data.

- process space

-

low-address half (for the process)

- program region (P0)

- low-address half of process space. contains the user's executable program. first page is reserved to cause errors on 0-address references

- control region (P1)

- high-address half of process space. this region is used to hold process-specific data

each space/region has it's own page table

- system space page table

- in hardware, not swapped on context switch

- process tables

- in the system-space, are swapped on context switch

memory management

paging issues

- effect of heavy pagers on other processes

- high cost of startup/restart (by faulting it's way into main memory)

- increased disk workload of paging

- processor time searching page lists

pager and swapper

- pager

- OS procedure resulting from page fault

- swapper

- separate process which moves pages into/out-of memory

dealing with the above issues

- the pager deals with this issue by evicting pages from the process which is requesting the new page, so one process won't push out everyone else's pages. also a limit is placed on the number of pages a process can have in memory.

- the above helps with this as well

- the VAX clusters the reading and writing of pages to relieve I/O burden on the disk

- by not having a reference bit (used to mark recently used pages) the VAX system takes load (scanning page tables and setting these bits) off of the processor

when pages are removed they are placed on the free page list or the modified page list depending on their modified bit and whether they need be written to memory. these lists serve as physical caches for recently removed pages (it is quick to move a page from one of these lists back to the working memory).

by caching the modified pages in the modified page list the following for speedups are gained.

- caches pages for quick return to the process

- clustered writes (~100 pages on the development system)

- arranged on paging file so clustering read is possible

- many page writes are avoided entirely

additional structures

- demand zero

- when processes require new pages they are created and filled with zeros on demand

- copy on reference

- when multiple processes using a page

program control of memory

for real-time programs that need explicit memory control

- expand it's P0 or P1

- increase it's resident set size

- lock (or unlock) pages in it's resident set

- create/map sections into it's address space

- record it's page-fault activity

Scheduler activations

introduction

user threads vs. kernel threads

- user threads

-

- requires no kernel intervention

- fast (on order of procedure call)

- flexible

- each thread runs on a "virtual processor" which still has to be multiplexed onto a real processor and interleaved with system calls, and kernel stuff leading to a performance hit

- sometimes exhibit incorrect performance when involve I/O

- kernel threads

-

- directly maps each application thread to a physical processor

- heavy weight

- not a restricted (RE: side effects, I/O)

the goal of this paper is to combine user/kernel threads

- common case (no kernel required) perform as user threads

- acts as kernel threads when needs to talk to kernel

- easily customizable

- difficulty is that relevant information is scattered between kernel space and user address space

the approach described in this paper is to give each user-level thread system with it's own virtualized machine which can have any number of processors.

problems w/user threads over kernel threads

- kernel threads must implement anything that any reasonable user-level thread system may need (too much overhead)

- when a user-level thread blocks (for I/O, fault, etc…) it's kernel thread also blocks

- if we create more kernel threads then there are processors then the OS must make scheduling decisions without any information about the priority / current-task / importance of the related user-level threads

design (scheduler activations)

each user-level thread system gets it own virtual multiprocessor

- kernel gives processors to user thread systems

- user thread system has complete control over use of it's virtual multiprocessor

- user thread system can tell kernel when it needs more threads

- user thread system only talks to kernel when it needs to

- looks to the application programmer like they are using kernel threads

-

communication from the kernel to the

user-level thread system which may cause it to reconsider it's

scheduling decisions.

-

roles

- serves as the vessel or context of the user-level thread

- notifies user-level thread of kernel event

- stores user-level thread when it's blocked (e.g. for I/O)

-

when a thread is stopped

- the kernel stuffs it into it's activation

- creates a new activation to tell the thread system that the thread has been stopped

- the thread system removes the thread, and tells the kernel the activation can be re-used

- the kernel does another upcall giving the newly released scheduler activation (processor) to the thread system to run a new thread on

- there are all ways as many activations assigned to an address space as there are actual processors

- in the same manner processors are moved from one address space (thread system) to another

-

roles

-

how user-level thread systems keep the kernel

informed about their amount of parallelism

- inform kernel when more threads than processors

- inform kernel when more processors than threads

-

when a thread is interrupted while in a

critical section

- the kernel makes an upcall informing the address space that the threads processor is ready

- this upcall is intercepted and given to the thread until it is out of it's critical section

- the thread is then put back on the ready queue and the address space is free to respond to the new processor however it sees fit

implementation

implemented by tweaking

- Topaz

- the native kernel threads for the firefly machine

- FastThreads

- a user-level thread package

performance

- same order of magnitude as plain user-threads

-

upcall performance is slow, much slower than normal kernel thread

operations

- written on top of existing kernel thread library (not from scratch)

- written in higher level language (not carefully tuned assembly)

-

N-body problem

-

speedup with more processors

- some increase over fast-threads

- significant increase over kernel threads

- more robust than fast-threads to lower amounts of memory

-

speedup with more processors

related ideas

psyche and symunix are both NUMA OSs which provide virtual processors similar to activation contexts.

differences

- both psyche and sumunix provide for shared address space between kernel and thread systems

- neither provides the exact functionality of kernel threads (for I/O etc…)

- neither provides efficient system for user-level thread system to notify kernel when it's hungry

summary

combine the performance of user-level threads with the functionality of kernel-level threads. this is done by supplying each user-level threading system with a virtual multiprocessor in which the application knows exactly how many processors it has at any one time (and each processor maps to an actual physical processor)

- processor allocation (between applications) is done by the kernel

- thread scheduling is done by address space

-

kernel notifies address space of events affecting it

- new processor

- less processor

- preempted thread

- address space notifies the kernel if it needs more/less processors

Monitors (2)

Monitors: An OS structuring concept

-

monitors are procedures or functions called by software wishing to

acquire a resources along with local administrative data

monitorname: monitor begin.. declarations of data local to the monitor; procedure procname (... formal parameters...) ; begin... procedure body... end; ... declarations of other procedures local to the monitor; ... initialization of local data of the monitor... end;

-

a procedure will have to

waitwhen the monitor is in use - when the program is waiting for the monitor, it needs to be sure that after the monitor is released, the very next procedure to execute will belong to itself

-

there are multiple reasons that a program will need to

wait, so the program will have to set aconditionvariable to indicate that it is waiting for the monitor

example of a monitor (resource:monitor) with condition variable nonbusy

single resource:monitor begin busy: Boolean; nonbusy : condition; procedure acquire; begin if busy then nonbusy.wait; busy : = true end; procedure release; begin busy := false; nonbusy.signal end; busy : = false; comment initial value; end single resource

the above example simulates a boolean semaphore with aquire and

release procedures.

interpretation

a process inside a monitor may need to signal another process. the

signaler must wait for the signaled to complete and to allow it to

proceed, it can increment an urgentcounter to indicate that it had

control of the monitor and should get it back.

then whenever the monitor is released, the urgentcounter should be

decremented and the longest waiting process on the counter restarted.

similarly we need to be able to allow process in monitors to wait as

well as signal which could be implemented similarly (with a

waitcounter)

given the above the monitor can be explicitly passed form one process to another, and only released when there are no more processes in the explicit passing of control

bounded buffer example

two processes running in parallel share a bounded buffer, one is the consumer (eating form the beginning) and one the producer (appending to the end).

the following implements this setup

bounded buffer:monitor begin buffer:array 0..N - 1 of portion; lastpointer:0..N - 1; count:0...N; nonempty,nonfull:condition; procedure append(x:portion); begin if count = N then nonfull.wait; note 0 <= count < N; buffer[lastpointer] := x; lastpointer := lastpointer + 1; count := count + 1; nonempty.signal end append; procedure remove(result x :portion); begin if count == 0 then nonempty.wait; note 0 < count <= N; x := buffer[lastpointer - count]; nonfull.signal end remove; count := 0; lastpointer := 0; end bounded buffer;

scheduled waits

sometimes rather than just selecting the longest waiting process from a variable we would prefer to allow processes to have some priority

real world examples

- buffer allocation

- disk head scheduling elevator algorithm

- readers and writers (only writers need exclusive access)

- to ensure writers can access elements, no readers can start while a writer is waiting

- to ensure readers get access, all readers queued during a write are allowed to read before the next write operation begins

-

variables

-

startread -

endread -

startwrite -

endwrite - number of waiting readers

- is someone writing

-

conclusion

monitors can be an appropriate structure for an OS with parallel users

Experience with Processes and Monitors in Mesa

Lampson and his team seem to make everything harder than it should be

issues

- programming structure

- must fit monitors into Mesa's module based organization

- creating processes

- need to be able to dynamically create processes after compile time (adds complications)

- creating monitors

- need to be able to dynamically create monitors after compile time (adds complications)

waitin nested monitor call- is confusing

- exceptions

-

make Mesa's

unwindfunctionality work well with monitors - scheduling

- moving from recommendations to implementation proved difficult

- input/output

- again moving from theory to practice can be hairy

description

(see mesa-monitors)

implementation

equal division between

- runtime

- implements the heavier rarely used stuff like process creation deletion

- compiler

- implements the various syntactic constructs and translated into built-in support procedures

- hardware

- directly implements the more heavily used stuff like scheduling and entry/exit

performance

| Construct | Time (ticks) |

|---|---|

| simple instruction | 1 |

| call + return | 30 |

| monitor call + return | 50 |

| process switch | 60 |

| WAIT | 15 |

| NOTIFY, no one waiting | 4 |

| NOTIFY, process waiting | 9 |

| FORK+JOIN | 1,100 |

conclusion

integration of monitors into Mesa was harder than anticipated given the amount of literature on monitors and the high level of Mesa, however, much work was done to implement monitors in such a way that they can be used as the sole concurrency construct for an entire OS/language.

questions

- wouldn't it also be a problem if I'm in my protected block, and hardware barges in and takes over the resource (breaks the monitor invariant)

Virtualization

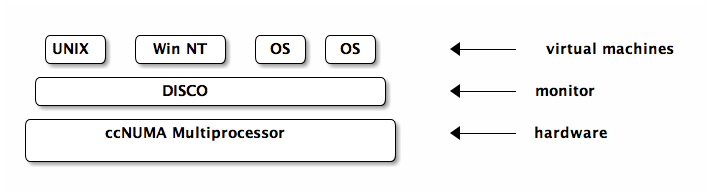

Commodity Operating Systems on Scalable Multiprocessors

comodity-os-on-multiprocessors.pdf

again cites the size and complexity of modern operating systems as limiting factor, this time in effectively utilizing massively multiprocessor machines.

rather than customize the OS this paper inserts a small virtual machine monitor between the OS and the hardware.

Demonstrated on the Stanford FLASH shared memory multiprocessor, with an experiments cache coherent non-uniform memory architecture or ccNUMA setup.

problem

hardware development moves very quickly, yet people like to bring all of their existing software (which is OS dependent) to this new hardware.

there is a need for quickly porting existing OSs to new hardware as this is the limiting factor in adoption of new hardware setups

virtual machine monitors

the virtual machine monitors serves as a thin layer between the hardware and existing comodity OSs (like windows NT or *NIX), exporting to each OS a set of virtualized resources which it is able to manage.

while the machine can communicate through standard external interfaces (NFS, TCP/IP), the monitor is able to efficiently assign resources across machines (i.e. one machine may get more memory if needed, etc…)

with small changes the OSs can explicitly take advantage of the shared memory between virtual systems (e.g. a database could put it's buffer cache in shared memory supporting multiple query servers)

the VM takes many burdens off of the OS

- only the VM need scale to the size of the hardware

- the VM can isolate separate OSs protecting from faults

- NUMA memory management

- in general handling hardware quirks

- VM issues

- overhead

-

-

additional

- exception processing

- instruction execution

- memory requirements

- large structure duplicated for each OS (file system buffers)

-

additional

- resource management

- the VM does not have high level information about the processing taking place, so it can't distinguish processing which is just the OSs busy loop from important calculations.

- communication

-

looks like different OSs on the same hardware

rather than each OS on it's own hardware, so

- same file can't be open in two different VMs

- same user can't start multiple VMs

DISCO (a virtual machine monitor)

DISCO is designed for the FLASH multiprocessor which consists of a collection of nodes arrayed on a high speed interconnect. each node contains a CPU, memory, and IO devices

Disco Interface

- processors

- exports a processor of the same type as those used by FLASH. OSs tuned to use disco can directly access some common processor functionality using special load/store instructions.

- physical memory

- exports continuous physical memory starting at 0, and handles all the NUMA stuff behind the scenes

- I/O devices

-

provides each OS with the illusion of their own I/O

devices. this means disco must intercept all I/O communication.

again provides special instructions for disco-aware OSs to bypass

this in special cases

- DISCO provides a virtual subnetwork which the machines can use to communicate amongst themselves

DISCO implementation

general

- as a multi-threaded shared memory program

- the small code portion of DISCO is duplicated across processors so page-misses are all local

- avoids linked-lists and other structures which perform poorly with caching

virtual CPU

- for speed DISCO direct executes most instructions and only tries to intercept dangerous instructions (like TLB modifications)

- runs in supervisor mode which is between kernel and user mode

- monitor catches traps and simulates them to the VM

virtual memory

- maintains machine-to-physical mapping

- catches VM attempts to update the TLB and uses them to update it's own TLB

-

downsides which decrease performances

- TLB used for OS code/memory

- TLB flushed between CPU switches

memory management

-

tries to be smart

- copies pages to the nodes where they are most used

- duplicates read-heavy pages between nodes that use them

- uses FLASH hardware support for counting cache misses per page and identifying hot pages

I/O devices

- intercepts all devices access

- add special DISCO device drivers into the OS

- DMA map (translates physical to virtual address spaces?)

copy-on-write disks

- multiple VMs can share pages in virtual memory

- copy-on-write means that this is transparent to the machines

- copy-on-write only makes sense for writes which will not be permanent or shared between machines

- user files and persistent disks DISCO only allows one VM to mount the disk at a time (or using distributes file system protocol like NFS)

DISCO (commodity OS)

currently supports a version of UNIX (IRIX), most changes to the OS resided in the HAL (hardware abstraction layer)

the special load/store call mentioned earlier to avoid traps are implemented in the HAL

experimentation

all takes place on SimOS a machine simulator

conclusion

developing system software for shared-memory multiprocessors, and more generally for new hardware.

DISCO shows that many of the performance limitations of VM setups are no longer an issue (sort of).

although software and OSs are growing in complexity the hardware-interface has remained relatively simple. supporting new hardware through a thin VM monitor such as disco is simpler and easier then rewriting the OS.

question

- DMA

- what is it?

Xen and the Art of Virtualization

Exokernel

don't hide power!

Allows untrusted user-level applications to have direct access to system hardware. They present ExOS, an operating system implemented entirely in user-space libraries.

does this by securely multiplexing hardware resources between untrusted software

many programs have specialized behavior and their performance is severely hampered by being forced into using general OS abstractions to access hardware

library OS

- libraries implementing some part of the OS can be app specific

- libraries can trust the application (the exokernel will errors from hurting other applications)

- less OS-app transitions since much of the OS (the library) is in the application's address space

exokernel requirements

- track ownership of resources

- performing access control (guarding usage or binding points)

- revoking access to resources

revocation

most OSs have invisible revocation of resources, so that application doesn't know when for example physical memory is being allocated or deallocated.

exokernels have visible revocation, so that applications can have some say in their allocation, and know when resources are scarce. even when the processor is taken at the end of a time-slice the application is notified.

this is necessary when the applications are using physical names to refer to resources, they must be notified upon revocation because their names will have to change

sometimes it's nice to allow "good faith" operations to take place before revocation of a resource

other times the exokernel will abort a misbehaving application

implementations

- Aegis

- exokernel

- ExOS

- Library OS

Aegis

- process environments

-

store the information needed to deliver

events associated with a resource to it's owner

- exception

- interrupt

- protected entry

- addressing

exceptions

transfers all exceptions to the application except system calls and interrupts

exception handling…

- saves three "scratch" registers into an agreed upon place

- loads the exception program counter, last non-valid virtual page address, and cause of exception

- uses exception cause to jump to pre-specified application program counter where processing resumes

features

- very fast

- very simple (because does not have to differentiate between TLB exceptions and all others)

address translation (application level virtual memory)

TODO

summary

an exokernel eliminates high level abstractions and focuses purely on securely multiplexing the hardware. a library OS can be build very efficiently upon an exokernel providing many of the standard OS features in a fast and extensible manner.

by allowing applications direct access to hardware it is possible for applications to greatly speed up their performance as compared to a traditional OS.

by implementing the majority of the OS as application libraries it is trivial to extend or tailor major components of the OS.

the only downside seems to be that the application has much more to worry about if it wants to take advantage of the potential speedup.

µ-kernels

performance-of-µ-kernel-based-systems

This paper aims to show that µ-kernel systems

- can run modern OS personalities

- can perform in the same range as normal monolithic kernels

- that extensions to µ-kernel based systems can be implemented efficiently in user space

- supports four basic processes; address-spaces, threads, scheduling, and synchronous inter-process communication

intro

- a µ-kernel only provides address space, threads, and IPC

-

many people think that µ-kernels are either

- too low

- and these people try to add safeguards, or abstractions for helping extensions

- too high

- and these people try to make µ-kernel interfaces look like hardware interfaces

-

first generation µ-kernels like Chorus and Mach

- evolved from monolithic kernels

-

second generation µ-kernels like QNX and L4

- designed form scratch

- more rigorous in pursuit of minimalist design

-

experiments

-

linux adapted to run on L4

- gives upper performance bound

- compare L4Linux to a linux adapted to the Mach kernel

- insight to µ-kernel functions that affect linux performance

- implemented pipes on top of µ-kernel and compared to native unix pipes

- implemented mapping-related OS extensions

- implemented first part of real time user-level memory management system

- moved the L4 to a new processor

- lower-level communication primitive

-

linux adapted to run on L4

related work

L4 essentials

based on two basic concepts, threads and address spaces

- thread

- activity executing inside of an address space

- IPC

- cross address-space communication is a fundamental µ-kernel mechanism

the initial address space represents physical memory, additional address spaces are constructed by granting, mapping, and unmapping flex-pages of sizes 2n. the owner of an address space can grant map and unmap it's pages to/from other address spaces. these user-level pagers handle all address space construction and maintenance.

- note

- mapping and unmapping pages is like creating and deleting pages. mapped to physical memory or not

when there is a page-fault it is IPC'd by the µ-kernel to the pager associated with the faulting thread. the pager and thread have complete control as to how to handle the fault allowing many options for memory management

I/O ports are handled as address spaces, with device interrupts handled as IPC

exceptions and traps are synchronous to the executing thread, they are mirrored up to user-level

linux on L4

as linux now runs on multiple architectures there is a fairly well-defined interface between architecture dependent and independent sections

-

architecture-defendant section

- interrupt service routine

- low-level device driver support

- user process interaction

- context switching

- copyin/copyout data between kernel and user spaces

- signaling

- mapping/unmapping of address spaces

- system-call mechanism

- linux uses a 3-level architecture independent page-table scheme

L4-linux design/implementation

- fully binary compliant

µ-kernel tasks are used for user processes and provide linux services via a single linux server in a separate µ-kernel task.

- the linux server

- linux kernel's address space maps 1-1 to the underlying pager

Unix Time Sharing System

wish I had read this to learn Unix/Posix systems

-

perhaps the most important achievement is demonstration of cheap

- $40,000 in hardware

- 2 man-years in development

- UNIX takes ~50K of ~144K of memory on the computer

- originally implemented largely in byte code, now all in C

File System

- ordinary files

- directories

- special files

types of files

- ordinary files: can hold any content, the file system places no limits

-

directories: fairly elegant specification of directories, each is a

file holding the names of the files it contains, there is a root

directory, there is normally a current directory, etc…

-

/is the "root" directory, which holds a path to all files -

there are links (a file can live in multiple directories)

- all links are equal (it doesn't actually live in any one) although in practice a file is made to disappear along with it's last link.

-

.and..are special

-

-

special files: each I/O device is associated with a special file

through which reading/writing to the I/O device occurs

- file/device I/O are as similar as possible

- file/device names have the same syntax and meaning

- same protection mechanism

- mount: system call which takes the name of an existing ordinary file, and the name of a special file which points to a device which has the structure of an independent file system. mount then replaces the existing file with the root of the independent file system. mounted file systems are identical to regular file systems with the single caveat that no links can exist between separate file systems.

protection

- uid: each user assigned a unique id