February 13, 2011

Proximate vs. Ultimate Causes

Jon Wilkins, a former colleague of mine at the Santa Fe Institute, has discovered a new talent: writing web comics. [1] Using the clever comic-strip generator framework provided by Strip Generator, he's begun producing comics that cleverly explain important ideas in evolutionary biology. In his latest comic, Jon explains the difference between proximate and ultimate causes, a distinction the US media seems unaware of, as evidenced by their irritating fawning over the role of social media like Twitter, Facebook, etc. in the recent popular uprisings in the Middle East. Please keep them coming, Jon.

-----

[1] Jon has several other talents worth mentioning: he's an evolutionary biologist, an award-winning poet, and a consumer of macroeconomic quantities of coffee, as well as a genuinely nice guy.

posted February 13, 2011 04:58 PM in Scientifically Speaking | permalink | Comments (0)

February 02, 2011

Whither computational paleobiology?

This week I'm in Washington DC, hanging out at the Smithsonian (aka the National Museum of Natural History) with paleontologists, paleobiologists, paleobotanists, paleoentomologists, geochronographers, geochemists, macrostratigraphers and other types of rock scientists. The meeting is an NSF-sponsored workshop on the Deep Time Earth-Life Observatory Network (DETELON) project, which is a community effort to persuade NSF to fund large-ish interdisciplinary groups of scientists exploring questions about earth-life system dynamics and processes using data drawn from deep time (i.e., the fossil record).

One of the motivations here is the possibility to draw many different skill sets and data sources together in a synergistic way to shed new light on fundamental questions about how the biosphere interacts with (i.e., drives and is driven by) geological processes and how it works at the large scale, potentially in ways that might be relevant to understanding the changing biosphere today. My role in all this is to represent the potential of mathematical and computational modeling, especially of biotic processes.

I like this idea. Paleobiology is a wonderfully fascinating field, not just because it involves studying fossils (and dinosaurs; who doesn't love dinosaurs?), but also because it's a field rich with interesting puzzles. Surprisingly, the fossil record, or rather, the geological record (which includes things that are not strictly fossils), is incredibly rich, and the paleo folks have become very sophisticated in extracting information from it. Like many other sciences, they're now experiencing a data glut, brought on by the combination of several hundred years of hard work, a large community of data collectors and museums, and computers and other modern technologies that make extracting, measuring and storing the data easier to do at scale. And, they're building large, central data repositories (for instance, this one and this one), which span the entire globe and all of time. What's lacking in many ways is the set of tools that can allow the field to automatically extract knowledge and build models around these big databases in novel ways.

Enter "computational paleobiology", which draws on the tools and methods of computer science (and statistics and machine learning and physics) and the questions of paleobiology, ecology, macroevolution, etc. At this point, there aren't many people who would call themselves a computational paleobiologist (or computational paleo-anything), which is unfortunate. But, if you think evolution and fossils are cool, if you like data with interesting stories, if you like developing clever algorithms for hard inference problems or if you like developing mathematical or computational models for complex systems, if you like having an impact on real scientific questions, and if you like a wide-open field, then I think this might be the field for you.

posted February 2, 2011 08:26 PM in Interdisciplinarity | permalink | Comments (6)

November 17, 2009

How big is a whale?

One thing I've been working on recently is a project about whale evolution [1]. Yes, whales, those massive and inscrutable aquatic mammals that are apparently the key to saving the world [2]. They've also been called the poster child of macroevolution, which is why I'm interested in them, due to their being so incredibly different from their closest living cousins, who still have four legs and nostrils on the front of their face.

Part of this project requires understanding something about how whale size (mass) and shape (length) are related. This is because in some cases, it's possible to get a notion of how long a whale is (for example, a long dead one buried in Miocene sediments), but it's generally very hard to estimate how heavy it is. [3]

This goes back to an old question in animal morphology, which is whether size and shape are related geometrically or elastically. That is, if I were to double the mass of an animal, would it change its body shape in all directions at once (geometric) or mainly in one direction (elastic)? For some species, like snakes and cloven-hoofed animals (like cows), change is mostly elastic; they mainly get longer (snakes) or wider (bovids, and, some would argue, humans) as they get bigger.

About a decade ago, Marina Silva [4], building on earlier work [5], tackled this question quantitatively for about 30% of all mammal species and, unsurprisingly I think, showed that mammals tend to grow geometrically as they change size. In short, yes, mammals are generally spheroids, and L = (const.) x M^(1/3). This model is supposed to be even better for whales: because they're basically neutrally buoyant in water, gravity plays very little role in constraining their shape, and thus there's less reason for them to deviate from the geometric model [6].

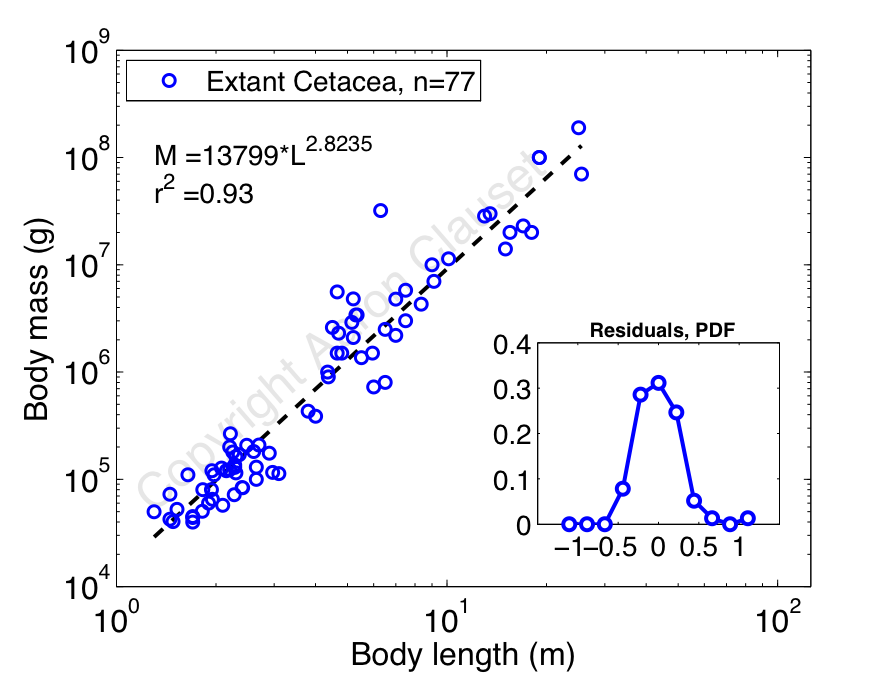

Collecting data from primary sources on the length and mass of living whale species, I decided to reproduce Silva's analysis [7]. In this case, I'm using about 2.5 times as much data as Silva had (n=77 species versus her n=31 species), so presumably my results are more accurate. Here's a plot of log mass versus log length, which shows a pretty nice allometric scaling relationship between mass (in grams) and length (in meters):

Aside from the fact that mass and length relate very closely, the most interesting thing here is that the estimated scaling exponent is less than 3. If we take the geometric model at face value, then we'd expect the mass of a whole whale to simply be its volume times its density, or

M = (const.) x (k_1 L) x (k_2 L) x L x (density)

where k_1 and k_2 scale the lengths of the two minor axes (its widths, top-to-bottom and left-to-right) relative to the major axis (its length L, nose-to-tail), the leading constant is a geometric shape factor, and the trailing factor is the density of whale flesh (here, assumed to be the density of water) [8].

If the constants k_1 and k_2 are the same for all whales (the simplest geometric model), then we'd expect a cubic relation: M = (const.) x L^3. But, our measured exponent is less than 3. So, this implies that k_1 and k_2 cannot be constants, and must instead decrease slightly with greater length L. Thus, as a whale gets longer, it gets wider less quickly than we expect from simple geometric scaling. But, that being said, we can't completely rule out the hypothesis that the scatter around the regression line is obscuring a beautifully simple cubic relation, since the 95% confidence interval around the scaling exponent does actually include 3, but just barely: (2.64, 3.01).
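To spell out the step from the measured exponent to the behavior of k_1 and k_2, here is a short derivation. The symbols c (a geometric shape factor), \rho (the density), and a (the fitted prefactor) are introduced here only for illustration, and the numerical example uses a hypothetical exponent b = 2.8, chosen simply because it lies inside the reported interval.

```latex
\begin{align*}
M &= c\,\rho\,(k_1 L)(k_2 L)\,L \;=\; c\,\rho\,k_1 k_2\,L^{3}, \\
M &= a\,L^{b} \;\Longrightarrow\; k_1 k_2 \;=\; \frac{a}{c\,\rho}\,L^{\,b-3}, \\
b &= 2.8 \;\Longrightarrow\; k_1 k_2 \propto L^{-0.2}, \qquad 10^{-0.2} \approx 0.63 .
\end{align*}
```

Under that assumed exponent, a whale ten times longer would have a product of relative widths only about 0.63 times as large; that is, it would be proportionally more slender.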

So, the evidence is definitely in the direction of a geometric relationship between a whale's mass and length. That is, to a large extent, a blue whale, which can be 80 feet long (25m), is just a really(!) big bottlenose dolphin, which is usually only 9 feet long (2.9m). That being said, the support for the most simplistic model, i.e., strict geometric scaling with constant k_1 and k_2, is marginal. Instead, something slightly more complicated happens, with a whale's circumference growing more slowly than we'd expect. This kind of behavior could be caused by a mild pressure toward more hydrodynamic forms over the simple geometric forms, since the drag on a longer body should be slightly lower than the drag on a wider body.

Figuring out if that's really the case, though, is beyond me (since I don't know anything about hydrodynamics and drag) and the scope of the project. Instead, it's enough to be able to make a relatively accurate estimation of body mass M from an estimate of body length L. Plus, it's fun to know that big whales are mostly just scaled up versions of little ones.
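For readers who want to try this kind of estimate themselves, here is a minimal sketch of fitting the scaling exponent and then predicting mass from length. It assumes ordinary least squares on log10(mass) versus log10(length), which may not be exactly how the fit above was done. The function names, the synthetic data, and the "true" exponent of 2.8 are all made up for illustration and are not the whale measurements discussed in this post.

```python
import numpy as np

def fit_power_law(length_m, mass_g):
    """Least-squares fit of log10(mass) = intercept + slope * log10(length).

    Returns (slope, intercept, slope_standard_error).
    """
    x = np.log10(np.asarray(length_m, dtype=float))
    y = np.log10(np.asarray(mass_g, dtype=float))
    dx = x - x.mean()
    sxx = np.sum(dx ** 2)
    slope = np.sum(dx * (y - y.mean())) / sxx
    intercept = y.mean() - slope * x.mean()
    residuals = y - (intercept + slope * x)
    # Standard error of the slope, with n - 2 degrees of freedom.
    slope_se = np.sqrt(np.sum(residuals ** 2) / (x.size - 2) / sxx)
    return slope, intercept, slope_se

def predict_mass(length_m, slope, intercept):
    """Invert the fitted line: mass in grams for a length in meters."""
    return 10.0 ** (intercept + slope * np.log10(length_m))

# Synthetic stand-in data (NOT real whale measurements): 77 "species" with
# lengths from about 1.6 m to 25 m and an assumed true exponent of 2.8.
rng = np.random.default_rng(0)
lengths = 10.0 ** rng.uniform(0.2, 1.4, size=77)
masses = 10.0 ** (4.0 + 2.8 * np.log10(lengths) + rng.normal(0.0, 0.15, size=77))

slope, intercept, slope_se = fit_power_law(lengths, masses)
# Rough 95% interval using the normal approximation (1.96 standard errors).
ci = (slope - 1.96 * slope_se, slope + 1.96 * slope_se)
print(f"exponent = {slope:.2f}, approx. 95% CI = ({ci[0]:.2f}, {ci[1]:.2f})")
print(f"predicted mass of a 10 m whale: {predict_mass(10.0, slope, intercept):.3g} g")
```

With real data, the same two functions would take the measured lengths and masses in place of the synthetic arrays; a fossil whale's estimated length then goes into predict_mass.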

More about why exactly I need estimates of body mass will have to wait for another day.

Update 17 Nov. 2009: Changed the 95% CI to 3 significant digits; tip to Cosma.

Update 29 Nov. 2009: Carl Zimmer, one of my favorite science writers, has a nice little post about the way fin whales eat. (Fin whales are almost as large as blue whales, so presumably the mechanics are much the same for blue whales.) It's a fascinating piece, involving the physics of parachutes.

-----

[0] The pictures are, left-to-right, top-to-bottom: blue whale, bottlenose dolphin, humpback whale, sperm whale, beluga, narwhal, Amazon river dolphin, and killer whale.

[1] Actually, I mean Cetaceans, but to keep things simple, I'll refer to whales, dolphins, and porpoises as "whales".

[2] Thankfully, the project doesn't involve networks of whales... If that sounds exciting, try this: D. Lusseau et al. "The bottlenose dolphin community of Doubtful Sound features a large proportion of long-lasting associations. Can geographic isolation explain this unique trait?" Behavioral Ecology and Sociobiology 54(4): 396-405 (2003).

[3] For a terrestrial mammal, it's possible to estimate body size from the shape of its teeth. Basically, mammalian teeth (unlike reptilian teeth) are highly differentiated and certain aspects of their shape correlate strongly with body mass. So, if you happen to find the first molar of a long dead terrestrial mammal, there's a biologist somewhere out there who can tell you both how much it weighed and what it probably ate, even if the tooth is the only thing you have. Much of what we know about mammals from the Jurassic and Triassic, when dinosaurs were dominant, is derived from fossilized teeth rather than full skeletons.

[4] Silva, "Allometric scaling of body length: Elastic or geometric similarity in mammalian design." J. Mammalogy 79, 20-32 (1998).

[5] Economos, "Elastic and/or geometric similarity in mammalian design?" J. Theoretical Biology 103, 167-172 (1983).

[6] Of course, fully aquatic species may face other biomechanical constraints, due to the drag that water exerts on whales as they move.

[7] Actually, I did the analysis first and then stumbled across her paper, discovering that I'd been scooped more than a decade ago. Still, it's nice to know that this has been looked at before, and that similar conclusions were arrived at.

[8] Blubber has a slight positive buoyancy, and bone has a negative buoyancy, so this value should be pretty close to the true average density of a whale.

posted November 17, 2009 04:33 PM in Evolution | permalink | Comments (2)

January 07, 2009

Synthesizing biofuels

Carl Zimmer is one of my favorite science writers. And while I was in the Peruvian Amazon over the holidays, I enjoyed reading his book on parasites, Parasite Rex. This way, if I managed to pick up malaria while I was there, at least I'd now know what a beautifully well adapted parasite I would have swimming in my veins.

But this post isn't about parasites, or even about Carl Zimmer. It's about hijacking bacteria to do things we humans care about. Zimmer's most recent book Microcosm is about E. coli and the things that scientists have learned about how cells work (and thus all of biology). And one of the things we've learned is how to manipulate E. coli to manufacture proteins or other molecules desired by humans. For science, this kind of reprogramming is incredibly useful and allows a skilled scientist to probe the inner workings of the cell even more deeply. This potential helped the idea gain the label "breakthrough of the year" in the last issue in 2008 of Science magazine.

This idea of engineering bacteria to do useful things for humanity, of course, is not a new one. I first encountered it almost 20 years ago in John Brunner's 1968 novel Stand on Zanzibar in a scene where a secret agent, standing over the inflatable rubber raft he just used to surreptitiously enter a foreign country, empties onto the raft a vial of bacteria that are specially engineered to eat the rubber. As visions of the future go, this kind of vision, in which humans use biology to perform magic-like feats, is in stark contrast to the future envisioned by Isaac Asimov's short stories on robots. Asimov foresaw a world dominated by, basically, advanced mechanical engineering. His robots were made of metal and other inorganic materials and humanity performed magic-like feats by understanding physics and computation.

Which is the more likely future? Both, I'd say, but in some respects, I think there's greater potential in the biological one and there are already people who are making that future a reality. Enter our favorite biological hacker Craig Venter. Zimmer writes in a piece (here) for Yale Environment 360 about Venter's efforts to now apply his interest in reprogramming bacteria in the direction of mass-producing alternative fuels.

The idea is to take ordinary bacteria and their cellular machinery for producing organic molecules (perhaps even simple hydrocarbons), and reprogram or augment them with new metabolic pathways that convert cheap and abundant organic material (sugar, sewage, whatever) into fuel like gasoline or diesel. That is, we would use most of the highly precise and relatively efficient machinery that evolution has produced to accomplish other tasks, and adapt it slightly for human purposes. The ideal result from this kind of technology would be to take photosynthetic organisms, such as algae, and have them suck CO2 and H2O out of the atmosphere to directly produce fuel. (As opposed to using other photosynthetic organisms like corn or sugarcane to produce an intermediate that can eventually be converted into fuel, as is the case for ethanol.) This would essentially use solar power to drive in reverse the chemical reactions that run the internal combustion engine, and would have effectively zero CO2 emissions. In fact, this direct approach to synthesizing biofuels using microbes is exactly what Venter is now doing.

The devil, of course, is in the details. If these microbe-based methods end up being anything like current methods of producing ethanol for fuel, they could end up being worse for the environment than fossil fuels. And there's also some concern about what might happen if these human-altered microbes escaped into the wild (just as there's concern about genetically modified food stocks jumping into wild populations). That being said, I doubt there's much to be worried about on that point. Wild populations have very different evolutionary pressures than domesticated stocks do, and evolution is likely to weed out human-inserted genes unless they're actually useful in the wild. For instance, cows are the product of a long chain of human-guided evolution and are completely dependent on humans for their survival now. If humans were to disappear, cows would not last long in the wild. Similarly, humans have, for a very long time now, already been using genetically-altered microbes to produce something we like, i.e., yogurt, without much danger to wild populations.

From microbe-based synthesis of useful materials, it's a short jump to microbe-mediated degradation of materials. Here, I recall an intriguing story about a high school student's science project that yielded some promising results for evolving (through human-guided selection) a bacterium that eats certain kinds of plastic. Maybe we're not actually that far from realizing certain parts of Brunner's vision for the future. Who knows what reprogramming will let us do next?

posted January 7, 2009 08:19 AM in Global Warming | permalink | Comments (0)

July 21, 2008

Evolution and Distribution of Species Body Size

One of the most conspicuous and most important characteristics of any organism is its size [1]: the size basically determines the type of physics it faces, i.e., what kind of world it has to live in. For instance, bacteria live in a very different world from insects, and insects live in a very different world from most mammals. In a bacterium's world, nanometers and micrometers are typical scales and some quantum effects are significant enough to drive some behaviors, but larger-scale effects like surface tension and gravity have a much more indirect effect. For most insects, typical scales are millimeters and centimeters, where quantum effects are negligible, but the surface tension of water matters tremendously. Similarly, for most mammals [2], a typical scale is more like a meter, and surface tension isn't as important as gravity and supporting your own body weight.

And yet despite these vast differences in the basic physical world that different types of species encounter, the distribution of body sizes within a taxonomic group, that is, the relative number of small, medium and large species, seems basically the same regardless of whether we're talking about insects, fish, birds or mammals: a few species in a given group are very small (about 2 grams for mammals), most species are slightly larger (between 20 and 80 grams for mammals), but some species are much (much!) larger (like elephants, which weigh over 1,000,000 times more than the smallest mammal). The ubiquity of this distribution has intrigued biologists since they first began to assemble large data sets in the second half of the 20th century.

Many ideas have been suggested about what might cause this particular, highly asymmetric distribution, and they basically group into two kinds of theories: optimal body-size and diffusion. My interest in answering this question began last summer, partly as a result of some conversations with Alison Boyer in another context. Happily, the results of this project were published in Science last week [3] and basically show that the diffusion explanation is, when fossil data is taken into account, really quite good. (I won't go into the optimal body-size theories here; suffice it to say that it's not as popular a theory as the diffusion explanation.) At its most basic, the paper shows that, while there are many factors that influence whether a species gets bigger or smaller as it evolves over long periods of time, their combined influence can be modeled as a simple random walk [4]. For mammals, the diffusion process is, surprisingly I think, not completely agnostic about the current size of a species. That is, although a species experiences many different pressures to get bigger or smaller, the combined pressure typically favors getting a little bigger (but not always). The result of this slight bias toward larger sizes is that descendant species are, on average, 4% larger than their ancestors.

But, the diffusion itself is not completely free [5], and its limitations turn out to be what cause the relative frequencies of large and small species to be so asymmetric. On the low end of the scale, there are unique problems that small species face that make it hard to be small. For instance, in 1948, O. P. Pearson published a one-page paper in Science reporting work where he, basically, stuck a bunch of small mammals in an incubator and measured their oxygen (O2) consumption. What he discovered is that O2 consumption (a proxy for metabolic rate) goes through the roof near 2 grams, suggesting that (adult) mammals smaller than this size might not be able to find enough high-energy food to survive, and that, effectively, 2 grams is the lower limit on mammalian size [6]. On the upper end, there is an increasingly dire long-term risk of becoming extinct the bigger a species is. Empirical evidence, both from modern species experiencing stress (mainly from human-related sources) as well as fossil data, suggests that extinction seems to kill off larger species more quickly than smaller species, with the net result being that it's hard to be big, too.

Together, this hard lower-limit and soft upper-limit on the diffusion of species sizes shape the distribution of species in an asymmetric way and create the distribution of species sizes we see today [7]. To test this hypothesis in a strong way, we first estimated the details of the diffusion model (such as the location of the lower limit and the strength of the diffusion process) from fossil data on about 1100 extinct mammals from North America that ranged from 100 million years ago to about 50,000 years ago. We then simulated about 60 million years of mammalian evolution (since dinosaurs died out), and discovered that the model produced almost exactly the size distribution of currently living mammals. Also, when we removed any piece of the model, the agreement with the data became significantly worse, suggesting that we really do need all three pieces: the lower limit, the size-dependent extinction risk, and the diffusion process. The only thing that wasn't necessary was, surprisingly, the bias toward slightly larger species in the diffusion itself [8], which I think most people thought was necessary to produce really big species like elephants.
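To give a feel for how the three ingredients described above fit together, here is a toy simulation. It is only a sketch and not the model from the paper: the function name, the generational update scheme, the way extinct species are replaced, and every parameter value are assumptions invented for illustration rather than estimates from fossil data.

```python
import numpy as np

def simulate_body_sizes(n_species=2000, n_generations=500, min_mass=2.0,
                        start_mass=40.0, sigma=0.1, bias=0.0,
                        base_extinction=0.05, extinction_slope=0.02, seed=0):
    """Toy constrained diffusion of species body mass (in grams).

    All parameter values are illustrative guesses, not estimates from data.
    """
    rng = np.random.default_rng(seed)
    log_min = np.log10(min_mass)
    log_mass = np.full(n_species, np.log10(start_mass))
    for _ in range(n_generations):
        # Multiplicative change in mass is an additive step in log space.
        proposal = log_mass + rng.normal(bias, sigma, size=n_species)
        # Hard lower limit: a step below the minimum mass is rejected.
        log_mass = np.where(proposal >= log_min, proposal, log_mass)
        # Soft upper limit: extinction risk grows with log mass.
        p_extinct = np.clip(
            base_extinction + extinction_slope * (log_mass - log_min), 0.0, 1.0)
        extinct = rng.random(n_species) < p_extinct
        survivors = log_mass[~extinct]
        if survivors.size == 0:
            break  # everything died; keep the last state
        # Extinct species are replaced by copies of random survivors,
        # a crude stand-in for speciation that keeps the count fixed.
        log_mass[extinct] = rng.choice(survivors, size=int(extinct.sum()))
    return 10.0 ** log_mass
```

Switching off one ingredient at a time (for example, calling it with extinction_slope=0.0, or with a nonzero bias) gives a quick sense of how each constraint changes the resulting distribution of sizes.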

Although this paper answers several questions about why the distribution of species body size is the way it is, there are several questions left unanswered, which I might try to work on a little in the future. In general, one exciting thing is that this model offers some possibilities for connecting macroevolutionary patterns, such as the distribution of species body sizes over evolutionary time, with ecological processes, such as the ones that make larger species become extinct more quickly than small species, in a relatively compact way. That gives me some comfort, since I'm sympathetic to the idea that there are reasons we see such distinct patterns in the aggregate behavior of biology, and that it's possible to understand something about them without having to understand the specific details of every species and every environment.

-----

[1] An organism's size is closely related to, but not exactly the same as, its mass. For mammals, their density is very close to that of water, but plants and insects, for instance, can be less or more dense than water, depending on the extent of specialized structures.

[2] The typical mammal species weighs about 40 grams, which is the size of the Pacific rat. The smallest known mammal species are the Etruscan shrew and the bumblebee bat, both of which weigh about 2 grams. Surprisingly, there are several insect species that are larger, such as the titan beetle which is known to weigh roughly 35 grams as an adult. Amazingly, there are some other species that are larger still. Some evidence suggests that it is the oxygen concentration in the atmosphere that mainly limits the maximum size of insects. So, about 300 million years ago, when the atmospheric oxygen concentrations were much higher, it should be no surprise that the largest insects were also much larger.

[3] A. Clauset and D. H. Erwin, "The evolution and distribution of species body size." Science 321, 399 - 401 (2008).

[4] Actually, in the case of body size variation, the random walk is multiplicative meaning that changes to species size are more like the way your bank balance changes, in which size increases or decreases by some percentage, and less like the way a drunkard wanders, in which size changes by increasing or decreasing by roughly constant amounts (e.g., the length of the drunkard's stride).

[5] If it were a completely free process, with no limits on the upper or lower ends, then the distribution would be a lot more symmetric than it is, with just as many tiny species as enormous species. For instance, with mammals, an elephant weighs about 10 million grams, and there are a couple of species in this range of size. A completely free process would thus also generate a species that weighed about 0.000001 grams. So, the fact that the real distribution is asymmetric implies that some constraints must exist.

[6] The point about adult size is actually an important one, because all mammals (indeed, all species) begin life much smaller. My understanding is that we don't really understand very well the differences between adult and juvenile metabolism, how juveniles get away with having a much higher metabolism than their adult counterparts, or what really changes metabolically as a juvenile becomes an adult. If we did, then I suspect we would have a better theoretical explanation for why adult metabolic rate seems to diverge at the lower end of the size spectrum.

[7] Actually, we see fewer large species today than we might have 10,000 - 50,000 years ago, because an increasing number of them have died out. The most recent population collapses are certainly due to human activities such as hunting, habitat destruction, pollution, etc., but even 10,000 years ago, there's some evidence that the disappearance of the largest species was due to human activities. To control for this anthropic influence, we actually used data on mammal species from about 50,000 years ago as our proxy for the "natural" state.

[8] This bias is what's more popularly known as Cope's rule, the modern reformulation of Edward Drinker Cope's suggestion that species tend to get bigger over evolutionary time.
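The distinction in footnote [4] between a multiplicative random walk (the bank balance) and an additive one (the drunkard) can be sketched in a few lines. The function names and the step sizes used here (a fixed stride of 1 gram, a fixed change of 10%) are hypothetical choices for illustration only.

```python
import numpy as np

def additive_walk(start, step, n_steps, rng):
    """Drunkard's walk: each step adds or subtracts a fixed amount."""
    moves = rng.choice([-1.0, 1.0], size=n_steps)
    return start + step * np.cumsum(moves)

def multiplicative_walk(start, factor, n_steps, rng):
    """Bank-balance walk: each step multiplies or divides by a fixed factor."""
    moves = rng.choice([-1.0, 1.0], size=n_steps)
    return start * factor ** np.cumsum(moves)

rng = np.random.default_rng(0)
additive = additive_walk(40.0, 1.0, 1000, rng)              # can drop below zero
multiplicative = multiplicative_walk(40.0, 1.1, 1000, rng)  # always stays positive
```

The multiplicative walk is just an additive walk in the logarithm of size, which is why body-size distributions are usually plotted on a log scale.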

posted July 21, 2008 03:01 PM in Evolution | permalink | Comments (0)

May 23, 2008

Shaping up to be a good year

Yesterday I heard the good news that my first paper (with Doug Erwin) on biology and evolution was accepted at Science. Unlike my experience with publishing in Nature, the review process for this paper was fast and relatively painless. I think this was partly because the paper's topic, on the evolution of species body masses, is a relatively conventional one in paleobiology / evolutionary biology / ecology. In fact, people have been thinking about this topic for more than 100 years, going all the way back to E. D. Cope in 1887 who suggested that mammal species had an inherent tendency to become larger over evolutionary timescales (millions of years). This idea went through several reformulations as our understanding of evolution matured over the 20th century. From a modern perspective, we now know from fossil data that changes to how big a species is are not deterministic in the sense that they always get bigger (as Cope thought), but rather changes are stochastic, with both decreases and increases happening with great frequency. The tendency, however, for many kinds of species (including mammals and brachiopods) is that the increases slightly outnumber the decreases (a pattern called Cope's Rule), perhaps because of competitive or robustness advantages from increased size.

Anyway, there's a lot more to say on this topic, but I'll wait until the paper comes out to say it. In general, it's been a lot of fun learning about evolution and ecology, and I hope to do some more work in this area in the future.

posted May 23, 2008 08:20 AM in Self Referential | permalink | Comments (2)

June 28, 2007

Hacking microbiology

Two science news articles (here and here) about J. Craig Venter's efforts to hack the genome (or perhaps, more broadly, hacking microbiology) reminded me of a few other articles about his goals. The two articles I ran across today concern a rather cool experiment where scientists took the genome of one bacterium species (M. mycoides) and transplanted it into a closely related one (M. capricolum). The actual procedure by which they made the genome transfer seems rather inelegant, but the end result is that the donor genome replaced the recipient genome and was operating well enough that the recipient looked like the donor. (Science article is here.) As a proof of concept, this experiment is a nice demonstration that the cellular machinery of the recipient species is similar enough to that of the donor that it can run the other's genomic program. But, whether or not this technique can be applied to other species is an open question. For instance, judging by the difficulties that research on cloning has encountered with simply transferring a nucleus of a cell into an unfertilized egg of the same species, it seems reasonable to expect that such whole-genome transfers won't be reprogramming arbitrary cells any time in the foreseeable future.

The other things I've been meaning to blog about are stories I ran across earlier this month, also relating to Dr. Venter's efforts to pioneer research on (and patents for) genomic manipulation. For instance, earlier this month Venter's group filed a patent on an "artificial organism" (patent application is here; coverage is here and here). Although the bacterium (derived from another cousin of the two mentioned above, called M. genitalium) is called an artificial organism (dubbed M. laboratorium), I think that gives Venter's group too much credit. Their artificial organism is really just a hobbled version of its parent species, where they removed many of the original genes that were not, apparently, always necessary for a bacterium's survival. From the way the science journalism reads though, you get the impression that Venter et al. have created a bacterium from scratch. I don't think we have either the technology or the scientific understanding of how life works to be able to do that yet, nor do I expect to see it for a long time. But, the idea of engineering bacteria to exhibit different traits (maybe useful traits, such as being able to metabolize some of the crap modern civilizations produce) is already a reality and I'm sure we'll see more work along these lines.

Finally, Venter gave a TED talk in 2005 about his trip to sample the DNA of the ocean at several spots around the world. This talk is actually more about the science (or more pointedly, about how little we know about the diversity of life, as expressed through genes) and less about his commercial interests. It appears that some of the research results from this trip have already appeared in PLoS Biology.

I think many people love to hate Venter, but you do have to give him credit for having enormous ambition, and for, in part, spurring the genomics revolution currently gripping microbiology. Perhaps like many scientists, I'm suspicious of his commercial interests and find the idea of patenting anything about a living organism to be a little absurd, but I also think we're fast approaching the day when putting bacteria to work doing things that we currently do via complex (and often dirty) industrial processes will be an everyday thing.

posted June 28, 2007 04:13 PM in Things that go squish | permalink | Comments (1)

March 25, 2007

The kaleidoscope in our eyes

Long-time readers of this blog will remember that last summer I received a deluge of email from people taking the "reverse" colorblind test on my webpage. This happened because someone dugg the test, and a Dutch magazine featured it in their 'Net News' section. For those of you who haven't been wasting your time on this blog for quite that long, here's a brief history of the test:

In April of 2001, a close friend of mine, who is red-green colorblind, and I were discussing the differences in our subjective visual experiences. We realized that, in some situations, he could perceive subtle variations in luminosity that I could not. This got us thinking about whether we could design a "reverse" colorblindness test - one that he could pass because he is color blind, and one that I would fail because I am not. Our idea was that we could distract non-colorblind people with bright colors to keep them from noticing "hidden" information in subtle but systematic variations in luminosity.

Color blind is the name we give to people whose vision is only dichromatic, rather than trichromatic as in 'normal' people. This difference is most commonly caused by a genetic mutation that prevents the colorblind retina from producing more than two kinds of photosensitive pigment. As it turns out, most mammals are dichromatic, in roughly the same way that colorblind people are - that is, they have a short-wave pigment (around 400 nm) and a medium-wave pigment (around 500 nm), giving them one channel of color contrast. Humans, and some of our closest primate cousins, are unusual for being trichromatic. So, how did our ancestors shift from being di- to tri-chromatic? For many years, scientists have believed that the gene responsible for our sensitivity in the green part of the spectrum (530 nm) was accidentally duplicated and then diverged slightly, producing a second gene yielding sensitivity to slightly longer wavelengths (560 nm, the red part of the spectrum). Amazingly, the red pigment differs from the green by only three amino acids, which corresponds to somewhere between 3 and 6 mutations.

But, there's a problem with this theory. There's no reason a priori to expect that a mammal with dichromatic vision, who suddenly acquired sensitivity to a third kind of color, would be able to process this information to perceive that color as distinct from the other two. Rather, it might be the case that the animal just perceives this new range of color as being one of the existing color sensations, so, in the case of picking up a red-sensitive pigment, the animal might perceive reds as greens.

As it turns out, though, the mammalian retina and brain are extremely flexible, and in an experiment recently reported in Science, Jeremy Nathans, a neuroscientist at Johns Hopkins, and his colleagues show that a mouse (normally dichromatic, with one pigment being slightly sensitive to ultraviolet, and one being very close to our medium-wave, or green sensitivity) engineered to have the gene for human-style long-wave or red-color sensitivity can in fact perceive red as a distinct color from green. That is, the normally dichromatic retina and brain of the mouse have all the functionality necessary to behave in a trichromatic way. (The always-fascinating-to-read Carl Zimmer, and Nature News have their own takes on this story.)

So, given that a dichromatic retina and brain can perceive three colors if given a third pigment, and a trichromatic retina and brain fail gracefully if one pigment is removed, what is all that extra stuff (in particular, midget cells whose role is apparently to distinguish red and green) in the trichromatic retina and brain for? Presumably, enhanced dichromatic vision is not quite as good as natural trichromatic vision, and those extra neural circuits optimize something. Too bad these transgenic mice can't tell us about the new kaleidoscope in their eyes.

But, not all animals are dichromatic. Birds, reptiles and teleost fish are, in fact, tetrachromatic. Thus, after mammals branched off from these other species millions of years ago, they lost two of these pigments (or, opsins), perhaps during their nocturnal phase, where color vision is less functional. This variation suggests that, indeed, the reverse colorblind test is based on a reasonable hypothesis - trichromatic vision is not as sensitive to variation in luminosity as dichromatic vision is. But why would a deficient trichromatic system (retina + brain) be more sensitive to luminal variation than a non-deficient one? Since a souped-up dichromatic system - the mouse experiment above - has most of the functionality of a true trichromatic system, perhaps it's not all that surprising that a deficient trichromatic system has most of the functionality of a true dichromatic system.

A general explanation for both phenomena would be that the learning algorithms of the brain and retina organize to extract the maximal amount of information from the light coming into the eye. If this happens to be from two kinds of color contrast, it optimizes toward taking more information from luminal variation. It seems like a small detail to show scientifically that a deficient trichromatic system is more sensitive to luminal variation than a true trichromatic system, but this would be an important step to understanding the learning algorithm that the brain uses to organize itself, developmentally, in response to visual stimulation. Is this information maximization principle the basis of how the brain is able to adapt to such different kinds of inputs?

G. H. Jacobs, G. A. Williams, H. Cahill and J. Nathans, "Emergence of Novel Color Vision in Mice Engineered to Express a Human Cone Photopigment", Science 315 1723 - 1725 (2007).

P. W. Lucas et al., "Evolution and Function of Routine Trichromatic Vision in Primates", Evolution 57 (11), 2636 - 2643 (2003).

posted March 25, 2007 10:51 AM in Evolution | permalink | Comments (3)

August 30, 2006

Biological complexity: not what you think

I've long been skeptical of the idea that life forms can be linearly ordered in terms of complexity, with mammals (esp. humans) at the top and single-celled organisms at the bottom. Genomic research in the past decade has shown humans to be significantly less complex than we'd initially imagined, having only about 30,000 genes. Now, along comes the recently sequenced genome of the heat-loving bug T. thermophila, which inhabits places too hot for most other forms of life, showing that a mere single-celled organism has roughly 27,000 genes! What are all these genes for? For rapidly adapting to different environments - if a new carbon source appears in its environment, T. thermophila can rapidly shift its metabolic network to consume and process it. That is, T. thermophila seems to have optimized its adaptability by accumulating, and carrying around, a lot of extra genes. This suggests that it tends to inhabit highly variable environments [waves hands], where having those extra genes is ultimately quite useful for its survival. Another fascinating trick it's learned is that its reproductive behavior shows evidence of a kind of genetic immune system, in which foreign (viral) DNA is excised before sexual reproduction.

From the abstract:

... the gene set is robust, with more than 27,000 predicted protein-coding genes, 15,000 of which have strong matches to genes in other organisms. The functional diversity encoded by these genes is substantial and reflects the complexity of processes required for a free-living, predatory, single-celled organism. This is highlighted by the abundance of lineage-specific duplications of genes with predicted roles in sensing and responding to environmental conditions (e.g., kinases), using diverse resources (e.g., proteases and transporters), and generating structural complexity (e.g., kinesins and dyneins). In contrast to the other lineages of alveolates (apicomplexans and dinoflagellates), no compelling evidence could be found for plastid-derived genes in the genome. [...] The combination of the genome sequence, the functional diversity encoded therein, and the presence of some pathways missing from other model organisms makes T. thermophila an ideal model for functional genomic studies to address biological, biomedical, and biotechnological questions of fundamental importance.

J. A. Eisen et al. Macronuclear Genome Sequence of the Ciliate Tetrahymena thermophila, a Model Eukaryote. PLOS Biology, 4(9), e286 (2006). [pdf]

Update Sept. 7: Jonathan Eisen, lead author on the paper, stops by to comment about the misleading name of T. thermophila. He has his own blog over at Tree of Life, where he talks a little more about the significance of the work. Welcome Jonathan, and congrats on the great work!

posted August 30, 2006 02:20 PM in Evolution | permalink | Comments (3)

August 23, 2006

A skeptic's skeptic

Dr. Michael Shermer, a long-time columnist for Scientific American and founder of the Skeptics Society, is one of the people on the front lines at the interface between science and pseudo-science. His careful critiques of popular misconceptions were one of my early introductions to the conflict between science and myth. For instance, it was in one of his columns that I learned of an excellent explanation of why smart people often believe really bizarre things: their intelligence makes them particularly good at rationalizing those crazy ideas. In one of his earlier articles, I even learned that Einstein once conducted a little experiment to see if time would seem to pass more quickly around a beautiful woman (his conclusion: it did). Salon is currently running a short but good interview with him. Apparently, Dr. Shermer used to be a creationist.

What caused you to see the light about evolution?

Like most creationists, you just know what you read in creationist books. When you read them, it makes the theory of evolution sound completely idiotic. What moron could believe in this theory? When you actually take a class in the science of it, it's a completely different picture. That's also when I realized I enjoyed the company of scientists and science people much more than religious people and theologians. It was this exciting, open-ended, participatory process that I could be involved in. We're all in this search together, and there's an actual method to do it, and a community of people who practice it, and a way of determining whether something is true or not. I fell in love with that.

posted August 23, 2006 12:05 PM in Evolution | permalink | Comments (1)

April 15, 2006

Ken Miller on Intelligent Design

Ken Miller, a cell biologist at Brown University, gave a (roughly hour-long) talk at Case Western University in January on his involvement in the Dover PA trial on the teaching of Intelligent Design in American public schools. The talk is accessible, intelligent and interesting. Dr. Miller is an excellent speaker and if you can hang in there until the Q&A at the end, he'll make a somewhat scary connection between the former dominance of the Islamic world (the Caliphate) in scientific progress and its subsequent trend toward non-rational theocratic thinking, and the position of the United States (and perhaps all of Western civilization) relative to its own future.

posted April 15, 2006 05:30 PM in Evolution | permalink | Comments (0)

February 09, 2006

What intelligent design is really about

In the continuing saga of the topic, the Washington Post has an excellent (although a little lengthy) article (supplementary commentary) about the real issues underlying the latest attack on evolution by creationists, a.k.a. intelligent designers. Quoting liberally,

If intelligent design advocates have generally been blind to the overwhelming evidence for evolution, scientists have generally been deaf to concerns about evolution's implications.

Or rather, as Russell Moore, a dean at the Southern Baptist Theological Seminary puts it in the article, "...most Americans fear a world in which everything is reduced to biology." It is a purely emotional argument for creationists, which is probably what makes it so difficult for them to understand the rational arguments of scientists. At its very root, creationism rebels against the idea of a world that is indifferent to their feelings and indifferent to their existence.

But, even Darwin struggled with this idea. In the end, he resolved the cognitive dissonance between his own piety and his deep understanding of biology by subscribing to the "blind watchmaker" point of view.

[Darwin] realized [his theory] was going to be controversial, but far from being anti-religious, ... Darwin saw evolution as evidence of an orderly, Christian God. While his findings contradicted literal interpretations of the Bible and the special place that human beings have in creation, Darwin believed he was showing something even more grand -- that God's hand was present in all living things... The machine [of natural selection], Darwin eventually concluded, was the way God brought complex life into existence.

(Emphasis mine.) The uncomfortable truth for those who wish for a personal God is that, by removing his active involvement in day-to-day affairs (i.e., God does not answer prayers), evolution makes the world less forgiving and less loving. It also makes it less cruel and less spiteful, as it lacks evil of the supernatural caliber. Evolution cuts away the black, the white and even the grey, leaving only the indifference of nature. This lack of higher meaning is exactly what creationists rebel against at a basal level.

So, without that higher (supernatural) meaning, without (supernatural) morality, what is mankind to do? As always, Richard Dawkins puts it succinctly, in his inimitable way.

Dawkins believes that, alone on Earth, human beings can rebel against the mechanistic indifference of nature. Understanding the pitiless ways of natural selection is precisely what can make humans moral, Dawkins said. It is human agency, human rationality and human law that can create a world more compassionate than nature, not a religious view that falsely sees the universe as fundamentally good and benevolent.

Isn't the ideal put forth in the American Constitution one of a secular civil society where we decide our own fate, we decide our own rules of behavior, and we decide what is moral and immoral? Perhaps the Christian creationists who wish for evolution, and all it represents, to be evicted from public education aren't so different from certain other factions that are hostile to secular civil society.

posted February 9, 2006 11:17 PM in Thinking Aloud | permalink | Comments (0)

January 30, 2006

Selecting morality

I've been musing a little more about Dr. Paul Bloom's article on the human tendency to believe in the supernatural. (See here for my last entry on this.) The question that's most lodged in my mind right now is this: What if the only way to have intelligence like ours, i.e., intelligence that is capable of both rational (science) and irrational (art) creativity, is to have these two competing modules, the one that attributes agency to everything and the one that coldly computes the physical outcome of events? If this is true, then the ultimate goal of creating "intelligent" devices may have undesired side-effects. If futurists like Jeff Hawkins are right that an understanding of the algorithms that run the brain is within our grasp, then we may see these effects within our lifetime. Not only will your computer be able to tell when you're unhappy with it, you may need to intuit when it's unhappy with you! (Perhaps because you ignored it for several days while you tended to your Zen rock garden, or perhaps you left it behind while you went to the beach.)

This is a somewhat entertaining line of thought, with lots of unpleasant implications for our productivity (imagine having to not only keep track of the social relationships of your human friends, but also of all the electronic devices in your house). But, Bloom's discussion raises another interesting question. If our social brain evolved to manage the burgeoning collection of inter-personal and power relationships in our increasingly social existence, and if our social brain is a key part of our ability to "think" and imagine and understand the world, then perhaps it is hard-wired with certain moralistic beliefs. A popular point of argument between theists and atheists is the question: If one does not get one's sense of morality from God, what is to stop everyone from doing exactly as they please, regardless of the consequences? The obligatory examples of such immoral (amoral?) behavior are rape and murder - that is, if I don't have in me the fear of God and his eternal wrath, what's to stop me from running out in the street and killing the first person I see?

Perhaps surprisingly, as the philosopher Daniel Dennett (Tufts University) mentions in this half-interview, half-survey article from The Boston Globe, being religious doesn't seem to have any impact on a person's tendency to do clearly immoral things that will get you thrown in jail. In fact, many of those who are most vocal about morality (e.g., Pat Robertson) are themselves cravenly immoral, by any measure of the word (a detailed list of Robertson's crimes; a brief but humorous summary of them (scroll to bottom; note picture)).

Richard Dawkins, the well-known British ethologist and atheist, recently aired a two-part documentary, of his creation, on the UK's Channel 4 attempting to explore exactly this question. (Audio portion for both episodes available here and here, courtesy of onegoodmove.org.) He first posits that faith is the antithesis of rationality - a somewhat incendiary assertion on the face of it. However, consider that faith is, by definition, the belief in something for which there is no evidence or for which there is evidence against, while rationally held beliefs are those based on evidence and evidence alone. In my mind, such a distinction is rather important for those with any interest in metaphysics, theology or that nebulous term, spirituality. Dawkins' argument goes very much along the lines of Steven Weinberg, Nobel laureate in physics, who once said "Religion is an insult to human dignity - without it you'd have good people doing good things and evil people doing evil things. But for good people to do evil things it takes religion." However, Dawkins' documentary points at a rather more fundamental question: Where does morality come from if not from God, or the cultural institutions of a religion?

This question was recently, although perhaps indirectly, explored by Jessica Flack and her colleagues at the Santa Fe Institute, in a paper published in Nature last week (summary here). Generally, Flack et al. studied the importance of impartial policing, by authoritative members of a pigtailed macaque troop, to the cohesion and general health of the troop as a whole. Their discovery that all social behavior in the troop suffers in the absence of these policemen shows that they serve the important role of regulating the self-interested behavior of individuals. That is, by arbitrating impartially among their fellows in conflicts, when there is no advantage or benefit to them for doing so, the policemen demonstrate an innate sense of a right and wrong that is greater than themselves.

There are two points to take home from this discussion. First, that humans are not so different from other social animals in that we need constant reminders of what is "moral" in order for society to function. But second, if "moral" behavior can come from the self-interested behavior of individuals in social groups, as is the case for the pigtailed macaque, then it needs no supernatural explanation. Morality can thus derive from nothing more than the natural implication of real consequences, to both ourselves and others, for certain kinds of behaviors, and the observation that those consequences are undesirable. At its heart, this is the same line of reasoning for religious systems of morality, except that the undesirable consequences are supernatural, e.g., burning in Hell, not getting to spend eternity with God, etc. But clearly, the pigtailed macaques can be moral without God and supernatural consequences, so why can't humans?

J. C. Flack, M. Girvan, F. B. M. de Waal and D. C. Krakauer, "Policing stabilizes construction of social niches in primates." Nature 439, 426 (2006).

Update, Feb. 6th: In the New York Times today, there is an article about how quickly a person's moral compass can shift when certain unpalatable acts are sure to be done (by that person) in the near future, e.g., being employed as a part of the State capital punishment team, but being (morally) opposed to the death penalty. This reminds me of the Milgram experiment (no, not that one), which showed that a person's moral compass could be broken simply by someone with authority pushing it. In the NYTimes article, Prof. Bandura (Psychology, Stanford) puts it thus:

It's in our ability to selectively engage and disengage our moral standards, and it helps explain how people can be barbarically cruel in one moment and compassionate the next.

(Emphasis mine.) With a person's morality being so flexible, it's no wonder that constant reminders (i.e., policing) are needed to keep us behaving in a way that preserves civil society. Or, to use the terms theists prefer, it is policing, and the implicit terrestrial threat embodied by it, that keeps us from running out in the street and doing profane acts without a care.

Update, Feb. 8th: Salon.com has an interview with Prof. Dennett of Tufts University, a strong advocate of clinging to rationality in the face of the dangerous idea that everything that is "religious" in its nature is, by definition, off-limits to rational inquiry. Given that certain segments of society are trying (and succeeding) to expand the range of things that fall into that domain, Dennett is an encouragingly clear-headed voice. Also, when asked how we will know right from wrong without a religious base of morals, he answers that we will do as we have always done, and make our own rules for our behavior.

posted January 30, 2006 02:43 AM in Thinking Aloud | permalink | Comments (3)

January 05, 2006

Is God an accident?

This is the question that Dr. Paul Bloom, professor of psychology at Yale, explores in a fascinating exposé in The Atlantic Monthly on the origins of religion, as evidenced by a belief in supernatural beings, in the neurological basis of our ability to attribute agency. He begins,

Despite the vast number of religions, nearly everyone in the world believes in the same things: the existence of a soul, an afterlife, miracles, and the divine creation of the universe. Recently psychologists doing research on the minds of infants have discovered two related facts that may account for this phenomenon. One: human beings come into the world with a predisposition to believe in supernatural phenomena. And two: this predisposition is an incidental by-product of cognitive functioning gone awry. Which leads to the question ...

The question being, of course, whether the nearly universal belief in these things is an accident of evolution optimizing brain-function for something else entirely.

Belief in the supernatural is an overly dramatic way to put the more prosaic idea that we see agency (willful acts, as in, free will) where none exists. That is, consider the extreme ease with which we anthropomorphize inanimate objects like the Moon ("O, swear not by the moon, th' inconstant moon, That monthly changes in her circled orb, Lest that thy love prove likewise variable." Shakespeare Romeo and Juliet 2:ii), complex objects like our computers (intentionally confounding us, colluding to ruin our job or romantic prospects, etc.), and living creatures whom we view as little more than robots ("smart bacteria"). Bloom's consideration of the question of why this innate tendency is apparently universal among humans is a fascinating exploration of evolution, human behavior and our pathologies. At the heart of his story arc, he considers whether easy attribution of agency provides some other useful ability in terms of natural selection. In short, he concludes that yes, our brain is hardwired to see intention and agency where none exists because viewing the world through this lens made (makes) it easier for us to manage our social connections and responsibilities, and the social consequences of our actions. For instance, consider an infant - Bloom describes experiments that show that

when twelve-month-olds see one object chasing another, they seem to understand that it really is chasing, with the goal of catching; they expect the chaser to continue its pursuit along the most direct path, and are surprised when it does otherwise.

But more generally,

Understanding of the physical world and understanding of the social world can be seen as akin to two distinct computers in a baby's brain, running separate programs and performing separate tasks. The understandings develop at different rates: the social one emerges somewhat later than the physical one. They evolved at different points in our prehistory; our physical understanding is shared by many species, whereas our social understanding is a relatively recent adaptation, and in some regards might be uniquely human.

This doesn't directly resolve the problem of liberal attribution of agency, which is the foundation of a belief in supernatural beings and forces, but Bloom resolves this by pointing out that because these two modes of thinking evolved separately and apparently function independently, we essentially view people (whose agency is understood by our "social brain") as being fundamentally different from objects (whose behavior is understood by our "physics brain"). This distinction makes it possible for us to envision "soulless bodies and bodiless souls", e.g., zombies and ghosts. With this in mind, certain recurrent themes in popular culture become eminently unsurprising.

So it seems that we are all dualists by default, a position that our everyday experience of consciousness only reinforces. Says Bloom, "We don't feel that we are our bodies. Rather, we feel that we occupy them, we possess them, we own them." The problem of having two modes of thinking about the world is only exacerbated by the real world's complexity, e.g., is a dog's behavior best understood with the physics brain or the social brain? Is a computer's behavior best understood with... you get the idea. In fact, it seems that you could argue quite convincingly that much of modern human thought (e.g., Hobbes, Locke, Marx and Smith) has been an exploration of the tension between these modes; Hobbes in particular sought a physical explanation of social organization. This also points out, to some degree, why it is so difficult for humans to be rational beings, i.e., there is a fundamental irrationality in the way we view the world that is difficult to first be aware of, and then to manage.

Education, or more specifically a training in scientific principles, can be viewed as a conditioning regimen that encourages the active management of the social brain's tendency to attribute agency. For instance, I suspect that the best scientists use their social mode of thinking when analyzing the interaction of various forces and bodies to make the great leaps of intuition that yield true steps forward in scientific understanding. That is, the irrationality of the two modes of thinking can, if engaged properly, be harnessed to extend the domain of rationality. There are certainly a great many suggestive anecdotes for this idea, and it suggests that if we ever want computers to truly solve problems the way humans do (as opposed to simply engaging in statistical melee), they will need to learn how to be more irrational, but in a careful way. I certainly wouldn't want my laptop to suddenly become superstitious about say, being plugged into the Internet!

posted January 5, 2006 04:50 PM in Scientifically Speaking | permalink | Comments (0)

May 14, 2005

The utility of irrationality

I have long been a proponent of rationality, yet this important mode of thinking is not the most natural for the human brain. It is our irrationality that distinguishes us from purely computational beings. Were we perfectly rational thinkers, there would be no impulse buys, no procrastination, no pleasant diversions and no megalomaniacal dictators. Indeed, being perfectly rational is so far from a good approximation of how humans think, it's laughable that economists ever considered it a reasonable model for human economic behavior (neoclassical microeconomics assumed this, although lately ideas are becoming more reasonable).

Perfect rationality, or the assumption that someone will always follow the most rational choice given the available information, is at least part of what makes it inherently difficult for computers to solve certain kinds of tasks in the complex world we inhabit (e.g., driving cars). That is, in order to make an immediate decision, when you have wholly insufficient knowledge about past, present and future, you need something else to drive you toward a particular solution. For humans, these driving forces are emotions, bodily needs and a fundamental failure to be completely rational, and they almost always tip the balance of indecision toward some action. Yet, irrationality serves a greater purpose than simply helping us to quickly make up our minds. It is also what gives us the visceral pleasures of art, music and relaxing afternoons in the park. The particularly pathological ways in which we are irrational are what makes us humans, rather than something else. Perhaps, if we ever encounter an extraterrestrial culture or learn to communicate with dolphins, we will, as a species, come to appreciate the origins of our uniqueness by comparing our irrationalities with theirs.

Being irrational seems to be deeply rooted in the way we operate in the real world. I recall a particularly interesting case study from my freshman psychology course at Swarthmore College: a successful financial investor had a brain lesion on the structure of the brain that is associated with emotion. The removal of this structure resulted in a perfectly normal man who happened to also be horrible at investing. Why? Apparently, because the brain normally stores a great deal of information about past decisions in the form of emotional associations, previous bad investments recalled a subconscious negative emotional response when jogged by similar characteristics of a present situation (and vice versa). Emotion, then, is a fundamental tool for representing the past, i.e., it is the basis of memory, and, as such, is both irrational and mutable. In fact, I could spend the rest of the entry musing on the utility of irrationality and its functional role in the brain (e.g., creativity in young songbirds). However, what is more interesting to me at this moment is the observation that we are first and foremost irrational beings, and only secondarily rational ones. Indeed, being rational is so difficult that it requires a particularly painful kind of conditioning in order to draw it out of the mental darkness that normally obscures it. That is, it requires education that emphasizes the principles of rational inquiry, skepticism and empirical validation. Sadly, I find none of these to be taught with much reliability in undergraduate Computer Science education (a topic about which I will likely blog in the future).

This month's Scientific American "Skeptic" column treats just this topic: the difficulty of being rational. In his usual concise yet edifying style, Shermer describes the tendency of humans to look for patterns in the tidal waves of information constantly washing over us, and explains that although it is completely natural for the human brain, evolved for this very purpose, to discover correlations in that information, it takes mental rigor to distinguish the true correlations from the false:

We evolved as a social primate species whose language ability facilitated the exchange of such association anecdotes. The problem is that although true pattern recognition helps us survive, false pattern recognition does not necessarily get us killed, and so the overall phenomenon has endured the winnowing process of natural selection. [...] Anecdotal thinking comes naturally; science requires training.

Thinking rationally requires practice, disciplined caution and a willingness to admit to being wrong. These are the things that do not come naturally to us. The human brain is so powerfully engineered to discover correlations and believe in their truth, that, for instance, even after years of rigorous training in physics, undergraduates routinely still believe their eyes and youthful assumptions about the conservation of momentum, over what their expensive college education has taught them (one of my fellow Haverford graduates Howard Glasser '00 studied exactly this topic for his honors thesis). That is, we are more likely to trust our intuition than to trust textbooks. The dangers of this kind of behavior taken to the extreme are, unfortunately, well documented.

Yet, despite this hard line against irrationality and our predilection toward finding false correlations in the world, this behavior has a utilitarian purpose beyond those described above, one that is completely determined by the particular characteristics and idiosyncrasies of being human and has implications for the creative process that is Science. For instance, tarot cards, astrology and other metaphysical phenomena (about which I've blogged before) may completely fail the test of scientific validation for predicting the future, yet they serve the utilitarian purpose of stimulating our minds to be introspective. These devices are designed to engage the brain's pattern recognition centers, encouraging you to think about the prediction's meaning in your life rather than thinking about it objectively. Indeed, this is their only value: with so much information, both about self and others, to consider at each moment and in each decision that must be made, the utility of any such device is in focusing on interesting aspects which have meaning to the considerer.

Naturally, one might use this argument to justify a wide variety of completely irrational behavior, and indeed, anything that stimulates the observer in ways that go beyond their normal modes of thinking has some utility. However, the danger in this line of argument lies in confusing the tool with the mechanism; tools are merely descriptive, while mechanisms have explanatory power. This is the fundamental difference, for instance, between physics and statistics. The former is the pursuit of natural mechanisms that explain the existence of the real structure and regularity observed by the latter; both are essential elements of scientific inquiry. As such, Irrationality, the jester which produces an incessant and uncountable number of interesting correlations, provides the material through which, wielding the scepter of empirical validation according to the writ of scientific inquiry, Rationality sorts in an effort to find Truth. Without the one, the other is unfocused and mired in detail, while without the other, the one is frivolous and false.

posted May 14, 2005 06:07 AM in Thinking Aloud | permalink | Comments (0)

April 30, 2005

Dawkins and Darwin and Zebra Finches

As if it weren't already painfully obvious from my previous posts, let me make it more obvious now:

Salon.com has an excellent and entertaining interview with the indomitable Richard Dawkins. I've contemplated picking up several of his books (e.g., The Selfish Gene and The Blind Watchmaker), but have never quite gotten around to it. Dawkins speaks a little about his new book, sure to inflame more hatred among religious bigots, and the trend of human society toward enlightenment. (Which I'm not so confident about, these days. Dawkins does make the point that it's largely the U.S. that's having trouble keeping both feet on the road to enlightenment.)

In a similar vein, science writer Carl Zimmer (NYTimes, etc.) keeps a well-written blog called The Loom in which he discusses the ongoing battle between the forces of rationality and the forces of ignorance. A particularly excellent piece of his writing concerns the question of gaps in the fossil record and how the immune system provides a wealth of evidence for evolution. The research article that Zimmer discusses appeared late last year in the Proceedings of the National Academy of Sciences.

Finally, in my continuing fascination with the things that bird brains do, scientists at MIT recently discovered that a small piece of the bird brain (in this case, the very agreeable zebra finch) helps young songbirds learn their species' songs by regularly jolting their understanding of the song pattern so as to keep it from settling down too quickly (for the physicists, this sounds oddly like simulated annealing, does it not?). That is, the jolts keep the young bird brain creative and trying new ways to imitate the elders. This reminds me of a paper I wrote for my statistical mechanics course at Haverford in which I learned that spin-glass models of recurrent neural networks with Hebbian learning require some base level of noise in order to function properly (and not settle into a glassy state with fixed domain boundaries). Perhaps the reason we have greater difficulty learning new things as we get older is that the level of mental noise decreases with time?
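
For the non-physicists, here is a minimal sketch of the simulated annealing idea, not a model of the finch brain or of the spin-glass networks mentioned above. Everything in it is an assumption made for illustration: the toy ENERGIES landscape (a shallow well at index 2, a deeper one at index 9), the function names, and the cooling parameters. A noiseless walker settles into the first well it finds, while the annealed walker gets random uphill "jolts" whose strength decays over time.

```python
import math
import random

# Hypothetical 1-D energy landscape (illustration only):
# a shallow well at index 2 and a deeper well at index 9.
ENERGIES = [3, 2, 1, 2, 3, 4, 3, 2, 1, 0, 1, 2]


def greedy_descent(energies, start):
    """Step to the lowest neighbor until no neighbor is strictly lower (no noise)."""
    i = start
    while True:
        neighbors = [j for j in (i - 1, i + 1) if 0 <= j < len(energies)]
        lowest = min(neighbors, key=lambda j: energies[j])
        if energies[lowest] >= energies[i]:
            return i
        i = lowest


def anneal(energies, start, t_start=2.0, cooling=0.9995, steps=20000, seed=0):
    """Metropolis random walk whose temperature (noise level) decays geometrically.

    Returns the lowest-energy index visited during the walk.
    """
    rng = random.Random(seed)
    i = best = start
    temperature = t_start
    for _ in range(steps):
        j = i + rng.choice((-1, 1))  # propose a step left or right
        if 0 <= j < len(energies):
            delta = energies[j] - energies[i]
            # Downhill moves are always accepted; uphill "jolts" are
            # accepted with probability exp(-delta / temperature).
            if delta <= 0 or rng.random() < math.exp(-delta / temperature):
                i = j
                if energies[i] < energies[best]:
                    best = i
        temperature *= cooling  # the noise fades as the walker "ages"
    return best


if __name__ == "__main__":
    print("greedy settles at index", greedy_descent(ENERGIES, 0))
    print("annealed walker's best index", anneal(ENERGIES, 0))
```

Starting from index 0, the greedy walker stops in the shallow well at index 2 because every neighbor is uphill. With enough early noise the annealed walker can climb over the barrier at index 5 and explore the deeper well, although whether a particular run does so depends on the seed and the cooling schedule. Starting the temperature near zero makes the annealed walker behave like the greedy one, which is the "settling down too quickly" case.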

posted April 30, 2005 04:15 AM in Obsession with birds | permalink | Comments (0)